No. Look at other videos. The stop button is on the screen.

Here are screenshots for the top 3 vids I watched: https://imgur.com/a/nn8BpBt

Dead man switch. Don't want someone stealing a car.

Oh hm. Good eye

Yeah might work similar to how 'summon' does, where you have to hold down the button. Otherwise it assumes a human might not actively be monitoring it and stops.

It's possibly just another quicker and more direct way to trigger a pause/emergency stop than fumbling with some small onscreen buttons while jostling around in a vehicle that may be doing something erratic. People are shitting on them for having this but I don't see what the big deal is vs the options they're already known to have. They're monitoring and it's likely the safest/quickest way for them to act if it came down to it. Either way, zero interventions across any of the rides today despite a couple awkward moves it recovered from both times.

Per the manual https://www.tesla.com/ownersmanual/modely/en_pr/GUID-2CB60804-9CEA-4F4B-8B04-09B991368DC5.html FSD automatically disengages when a door is opened. I am positive this would also stop the specialized robotaxi software that is being run in these vehicles.

It’s in case they need to tuck and roll

Two of the three people seen in the screenshots OP posted rest their thumbs beside the button, so I doubt it's a dead man switch.

I agree 100%! I don’t blame them for having a reprogrammed button optimized for their role in the first rides.. I just think it’s an interesting observation.

That's an interesting theory, and would work.

It’s not reprogrammed. If you open a door during FSD it’ll disengage.

And then who's driving?

https://x.com/teslapodcast/status/1936922325808562343?s=46&t=DJmv2YLmRbUb4ElSRE6fow At timestamp 15:10-15:12, a car cuts in front of the robotaxi and the Safety Monitor guy puts out his hand to hover over the buttons on the screen. Why would he do that if the door button was a safety switch?

Yeah, that’s a good observation

This exact driver signals to the right and then the car pulled right in to a spot…I thought I was imagining things but now starting to wonder

If robotaxi still needs a human in the passenger seat, why not just put them in the driver's seat instead??

Because the door button probably completely shuts down FSD. He was about to press the "stop in lane" button which just tells the car to do something instead of being "safety critical intervention" or whatever you want to call it

Ejecto seato cuz

Optics

It seems like a rough-around-the-edges solution. Curious, what does Waymo do? Are there any downsides to this? Seems rough but reasonable.

These cars are most likely teleoperated. This "safety driver" stuff is probably theater. A true safety driver would have a game controller in hand, like the Titan sub did. If the software works, why isn't it first on M3 and MY as was promised in, what was it, 2019? It has a steering wheel, brakes, and a driver that can take over, vs bail out.

It's a *very* Tesla solution: Create custom vehicle functions by leveraging existing hardware via custom software.

Because this is purely a temporary thing for testing

That button doesn't normally work over certain speeds. It is almost certainly reprogrammed, given the way they are ready to press it.

Tip the hat

Not really a dead man switch though cause if the guy died of a brain aneurysm the car would just keep chugging along lol.

Yeah, move fast and break things. Just seems like a spot you don’t want to take those risks but I guess they worked from the top down.

Waymo tends to just react well IMO, e.g. https://x.com/dmitri_dolgov/status/1930337733719011608?s=46

Stop fsd and what?

It’s geofenced in Austin, you’re behind

I’ve actually come up with another thought. The safety passenger doesn’t want to scare riders so that specific button could handle the situation more gracefully than what we are thinking. It’s very possible that button does more than we expect in a normal Tesla. Software safety features. Now I’d like to know what happens if you try to press the rear passenger door button.

It just slows down to a stop.

They could have had a safety driver in the seat to actually take control. I'm guessing Elon vetoed that idea, so the on-screen buttons and door opening became the overrides.

What if the button just triggers a remote operator to watch/operate the car?

It’s not teleoperated. Those that have used it know that the software works this well in consumer cars.

Ummm. It does work. I routinely use it around town and have taken a number of trips over 5 hours with no interventions. I’m guessing Tesla doesn’t have a person back at headquarters driving my car for me.

exit the vehicle, this is not a drill!

It's probably both. Buttons onscreen plus door switch in a true emergency

Wait yeah I'm stupid, if they had to hold the button down for hours it would suck. Might be for additional security or something.

A driver in the driver's seat for a \*driverless car launch\*. Genius. What are you gonna call it, Uber? The entire point of this day is to launch \*driverless\* taxis. Driverless. Doesn't matter who said what or who vetoed what. The very idea of the product launch is to not have anyone seated there.

It's probably a hidden emergency 'Karen Eject' button, so they can eject any Karen passengers

The main issue is this: in Model Y cars, the physical emergency release for the rear doors is hidden under the door. It’s not exposed or easily accessible from the outside, and most users aren’t aware of it. Many people who know about it attach something to the hidden trigger to make access easier. If the car malfunctions in some way, someone who doesn’t know about this could get trapped inside, which I think is a very dangerous situation.

Waymo doesn't need such precautions because it is already able to drive reliably at level 4 autonomy. That also means no safety driver. Waymo has already provided millions of rides. There are important differences in user expectations between a taxi passenger and a driver supervising an autonomous vehicle, who can compensate for system limitations. Pre-mapping is actually critical to safe and reliable robotaxi services. It is one of the core functionalities that enable Waymo to achieve L4 autonomy. It is not just because they have radar and lidar. In China, all robotaxi operators use pre-mapping.

*Marketing

Why would you disengage FSD when the door opens? Would it not be wise for the car to quickly pull over?

[removed]

So now you've got a rapidly slowing car _with a door open_. This is insane. It's unsafe and everyone can clearly see it.

These are Model Ys. They have steering wheels. Having someone in the driver seat would surely have been safer, but not great for optics...

You obviously don't own a Tesla and don't know anything about them. A Tesla will not allow you to open a door while moving unless you use the emergency release.

Do you think the people using the taxi will know this as well? The door doesn't open, or you use the door open button as a janky hack to stop? Is any of this acceptable?

Why would you open the door of a moving vehicle? It just makes sense for it to stop and let you out if you start spamming the door open button... There's a stop button on the screen as well

Dude, why are you even commenting when you don’t know how it works?

good catch!

You have zero evidence that it opens the doors. It's the same button that refuses to open the door if the car sees a bicycle approaching; there is no chance the door is flying open if it's pressed with the vehicle in motion. This whole "unacceptable" nonsense tantrum you're throwing is based on bonkers speculation over something that literally doesn't matter and is hardly different than what Waymos looked like with initial safety monitors. The reality is this is a driverless system that ultimately required zero human intervention from its opening at 2pm to its closing at midnight on its first day operating. You might want to try toning down the mental gymnastics.

Better to be hit while inside the Tesla than jump out of the car before an accident. Trying to open the door just disengages the FSD and the car will come to a slow stop, then the car will unlock and allow you to actually open the door. You're being super dramatic.

My guess is that it could also be a panic button for the employee's safety and of course also having an e-stop (a more extreme version of what the employee can do from the screen) makes perfect sense. After all Musk said they would be "super paranoid" about safety...

The safety monitor is required to be there under the terms of their insurance. EDIT: wrong word.

It's required by the operating license.

I think we're overthinking it. Dirty Tesla filmed a situation where the Safety Monitor reached for the Stop In Lane button on the centre screen when a car ahead swerved back into the lane. He didn't need it in the end as the Robotaxi handled it unfazed.

The operating license specifically requires them to be in the passenger seat?

IIRC, the car will engage the parking brake if you open the door while moving. That would probably count as emergency stop for a robotaxi.

1) Wouldn’t a car cutting you off/potential collision be a potentially appropriate time for critical intervention? 2) Disabling FSD isn’t helpful here? Braking would be? 3) Honestly my current guess right now is that’s the equivalent of the ‘oh shit’ bar.

> a number of trips

So, not all of them? Because “all of them” is how many intervention-less trips a driverless car needs to be able to do. A ten year taxi could make well over 1.5 million trips. Have you gotten to 1.5 million trips in a row without issue?

We should assume that the button has been reprogrammed to something like "stop immediately" for these trials.

> It’s not reprogrammed.

And you know this how? It would make sense for them to do it.

Do you think that the passengers will read the manual before using this sort of thing, or just assume stuff?

I would assume they’ve set up the Robotaxi software to show instructions prior to getting in. They don’t need to read the manual, as this isn’t something we ever would do in our own Teslas.

I'd say a stop button on a bit of new tech is warranted. I'm also surprised that Waymos don't have them. There are videos from passengers trapped in the vehicles going round in circles (not today). There are a few videos online from today about strange behaviour when steering in an empty lane. If that was a human driver, I would absolutely be telling them to stop and would get out.

This!

Emergency brake.

This is the "I don't trust the robot driving my car so I need to hold onto this for dear life" handle.

The door button invokes The Kobayashi Maru Maneuver.

Sounds great in theory but seriously think this through. The car is about to have a massive accident and he opens the door???

> And you know this how?

And how do you know it *is* reprogrammed? You're engaging in [Negative Proof Fallacy](https://www.qcc.cuny.edu/socialSciences/ppecorino/PHIL_of_RELIGION_TEXT/CHAPTER_5_ARGUMENTS_EXPERIENCE/Burden-of-Proof.htm) ...

*The burden of proof is always on the person making an assertion or proposition. Shifting the burden of proof, a special case of argumentum ad ignorantiam, is the fallacy of putting the burden of proof on the person who denies or questions the assertion being made. **The source of the fallacy is the assumption that something is true unless proven otherwise.***

I believe this is likely it in the early days

I didn't make any claim. You did. 🤷🏻♂️

It’s the secret door open button that creates rainbows.

Unless they've modified the parameters, the door button is disabled until the car is essentially stopped anyway. Even if that's the case, it seems like it would be a reasonable thing to have during the initial rollout/testing. It seemed pretty darn capable from the videos I saw, which isn't surprising, I use the latest consumer build daily and intervene maybe once or twice a week. None of my interventions have been safety critical in over a month.

You said the button has been "reprogrammed" into doing something else. That's a claim. You provide no proof. My claim is the *default operating functions of a Tesla* \- normal FSD behavior would kick in if you open the door, it will bring the car to an immediate stop as safely as it can (I mean, just read the Tesla user manual if you don't believe me). So my claim is the *default* state of a Tesla. You are saying it's something else, with zero proof of that fact. You can keep replying here, but everyone can see how ridiculous your arguments are. You're only just drawing more negative attention to yourself...

Tesla equivalent to an aircraft’s TOGA switch

First time seeing Waymo's visualizations, those are really slick.

It’s a grab handle, and the guy is *checks notes* grabbing it

I did in fact not say that. Maybe you confuse me with the poster you replied to. In either case, you just made a counter-claim, which is just as erroneous. Just because that is the normal behaviour in consumer cars, that does not mean it has to be the same way in these robotaxis. Logic tells us that a remapping would be a sensible thing to do, but we do not know either way.

As far as [this map](https://txdot.maps.arcgis.com/apps/dashboards/f4dd9ee9f87447d3ac3cdef192b3910f) from TXDOT reads, [it is not required.](https://pbs.twimg.com/media/GtWsf1MbMAQsIyI?format=jpg&name=medium)

Sorry, thought you were this commenter: [https://www.reddit.com/r/teslamotors/comments/1li5c3x/comment/mz9l5w2/](https://www.reddit.com/r/teslamotors/comments/1li5c3x/comment/mz9l5w2/) But no, you inserted yourself. My bad. Nothing else I said is off base -- this is all a whole bunch of "*AKSHUALLY! They are using a secret control on the handrest! **Prove they aren't!***" stupidity...

Apologies - insurance, not license.

It’s true that once in a while I do have to intervene. And no - I have not taken over 1.5 million trips - but it wouldn’t surprise me if TESLAs have taken over 1.5 million trips without intervention. Maybe even significantly more (remember - they have the data to know EXACTLY how many) BUT here’s the real kicker……. In those 1.5 million 10 year taxi trips a human makes - do they NEVER get into an accident?????? Do they NEVER get into one so bad that someone is killed??? Do the passengers NEVER have a dangerous interaction with the person driving???? We humans constantly overestimate our abilities. Here’s how I think about it. FSD has taken some major leaps forward recently - especially with HW4. The more I use it, the more I can help Tesla find those edge conditions it doesn’t handle well. To me - this is an investment in MY future. Families have to constantly make the hard decision to take driving away from their aging family members. I’m VERY hopeful and optimistic that they will have this figured out so my family NEVER has to make that decision for me. Because - I WON’T BE DRIVING! If I can play even a TINY role in that process - it’s awesome. And no amount of Elon hate should stop others from buying one of the best cars available and using FSD.

having to remind people that haven't been in a tesla before that the button opens the door and not the logical place to try and open the door (which is the emergency release) is always fun...

American drivers average one accident about every 165,000 miles. If a system disengages or requires intervention more than once every 165,000 miles it probably shouldn’t be considered as good as a human
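To put a rough number on that comparison, here is a back-of-the-envelope sketch. The 165,000-mile benchmark is the figure from the comment above, the 450-mile input is the disengagement estimate quoted elsewhere in this thread, and the function name is made up for illustration. It treats an intervention as if it were an accident, which is the comment's own framing and probably overstates the gap.

```python
HUMAN_MILES_PER_ACCIDENT = 165_000  # figure quoted in the comment above


def gap_to_human_benchmark(miles_per_intervention: float) -> float:
    """Return how many times further the system would need to drive
    between interventions to match the human miles-per-accident figure."""
    if miles_per_intervention <= 0:
        raise ValueError("miles_per_intervention must be positive")
    return HUMAN_MILES_PER_ACCIDENT / miles_per_intervention


# 450 miles is the disengagement estimate quoted elsewhere in this thread,
# not an official number.
print(round(gap_to_human_benchmark(450)))  # prints 367
```

So on those numbers the system would need to go several hundred times further between interventions to clear the bar described above.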

As far as I know, above a few mph the button does *nothing*. Click click click… no response, no action, no notification.

If the sexy button can do custom things, Tesla can too.

lol I’ve owned a Tesla for 6 years and I had no idea. How did you figure this out? Did someone else tell you or did you try to open your door while driving?

Things will not always be the way they have been. That expectation is a good way to find yourself irrelevant.

Everyone saying “pressing the button to open the door disengages FSD as a normal expected behavior” probably hasn’t tried it. I just finished an FSD commute on 13.2.9 and the car doesn’t respond to the door release button at all while driving.

I just tried to use the same door release button the Safety Monitor is using while in FSD, and the car didn’t respond at all… not even a chime. I’m sure if I used the emergency release lever it would probably try to stop/pull over as indicated in the manual. I’m not brave enough to test it out quite yet hahaha

It's literally right in the documentation: [https://www.tesla.com/ownersmanual/modely/en\_us/GUID-2CB60804-9CEA-4F4B-8B04-09B991368DC5.html](https://www.tesla.com/ownersmanual/modely/en_us/GUID-2CB60804-9CEA-4F4B-8B04-09B991368DC5.html)

*To disengage Full Self-Driving (Supervised), do any of the following:*

* *Press the brake pedal.*
* *Press the right scroll wheel on the steering wheel.*
* *Take over and steer manually.*

*In addition, Full Self-Driving (Supervised) will disengage if any of the following occurs:*

* *You shift out of Drive.*
* ***A door or trunk is opened.***
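The conditions quoted from the manual boil down to a simple "any of these" check. The sketch below is only an illustration of that logic: `VehicleState`, its field names, and `fsd_should_disengage` are invented here and are not Tesla's actual software or API.

```python
from dataclasses import dataclass


@dataclass
class VehicleState:
    """Hypothetical snapshot of the inputs named in the manual excerpt."""
    brake_pressed: bool = False
    right_scroll_wheel_pressed: bool = False
    driver_steering: bool = False
    in_drive: bool = True
    door_or_trunk_open: bool = False


def fsd_should_disengage(state: VehicleState) -> bool:
    """True if any disengagement condition from the quoted manual holds."""
    # Deliberate driver actions listed in the manual.
    driver_action = (
        state.brake_pressed
        or state.right_scroll_wheel_pressed
        or state.driver_steering
    )
    # Conditions under which the manual says FSD disengages on its own.
    automatic = (not state.in_drive) or state.door_or_trunk_open
    return driver_action or automatic


print(fsd_should_disengage(VehicleState()))                         # False
print(fsd_should_disengage(VehicleState(door_or_trunk_open=True)))  # True
```

Note that the input here is whether a door is actually open, not whether the door button was pressed, and that distinction is exactly what the rest of this thread is arguing about.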

Yes. I’m sure that if a door were opened with the emergency lever (since the button doesn’t respond while driving), it would absolutely do what you’re describing and what is published in the manual. That said, since the door doesn’t normally open using the button when at speed, I am simply curious and speculating if the functionality of the button changes (from disabled) for the RoboTaxi’s first public-facing software build. It’s possible for us to both be right 😊

> Pre-mapping is actually critical to safe and reliable robotaxi services.

I haven't seen a single argument that holds water for this position. You're able to navigate the world without a 3D map of it in your head. You're able to drive in unfamiliar places. An AI should be able to, too.

That said, Tesla has millions of high definition wide angle photos of virtually every square inch of every city and highway in the US in their training data. I feel like it should be trivial to essentially post-map by generating mapping from external camera footage of the millions of Tesla cars on the road.

Although Waymo did use safety drivers at first. Probably any company launching a new service will include safety drivers for some period of time.

sshhhh...it's the copilot's side stick controller like an airbus :)

Doubt it's what OP suggests, because he wouldn't need to rest his hand there the whole time. My guess is they added a hidden function that he taps to confirm he is awake, or the button was programmed so a short tap saves data on a concern he spotted and a long hold opens the door. With software they can give it multiple functions, just like the scroll wheel on the steering wheel.
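For what it's worth, a short-tap vs long-hold split is easy to do in software. This is a purely speculative sketch of the idea in the comment above: the threshold, the action names, and `classify_press` are all invented for illustration, and nothing here reflects how the Robotaxi build actually works.

```python
LONG_HOLD_SECONDS = 1.0  # hypothetical threshold, chosen only for the example


def classify_press(pressed_at: float, released_at: float) -> str:
    """Map one button press to an action based on how long it was held.

    Times are in seconds. A short tap flags the moment for later review,
    a long hold requests the door, mirroring the guess in the comment.
    """
    duration = released_at - pressed_at
    if duration < 0:
        raise ValueError("released_at must not be earlier than pressed_at")
    if duration >= LONG_HOLD_SECONDS:
        return "open_door"
    return "flag_event"


print(classify_press(10.0, 10.2))  # flag_event
print(classify_press(10.0, 11.5))  # open_door
```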

Irrelevant to teleoperation.

It's easy for you to walk around, make jokes, understand nuance, and get sarcasm. AI systems are currently struggling with any of that. AI is particularly adept at quickly processing patterns and identifying any changes in those patterns, which is why traveling a pre-mapped route could be an advantage. It knows what to look for in traffic flow, changes to road surfaces, and random events. It doesn't have to process EVERYTHING all at the same time.

It doesn't matter when it works. The problem is that the data shows that FSD has a disengagement every 400 miles, or something like that, 450, I forget the exact number, so that's once every 2-3 days of taxi service. THOSE are the moments they're there for. It's how flawlessly it does over 50 hours, 500 hours, that matters, not 5.
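The "2-3 days" figure follows from dividing miles per disengagement by how far a taxi drives in a day. A quick sketch, assuming the roughly 450-mile figure from the comment above; the daily mileages of 150 and 225 are my own assumptions, picked because they reproduce the 2-3 day range, and the function name is hypothetical.

```python
def days_between_disengagements(miles_per_disengagement: float,
                                taxi_miles_per_day: float) -> float:
    """Expected days of taxi service between disengagements."""
    if taxi_miles_per_day <= 0:
        raise ValueError("taxi_miles_per_day must be positive")
    return miles_per_disengagement / taxi_miles_per_day


# 450 comes from the comment above; the daily mileages are assumed values.
for miles_per_day in (150, 225):
    print(miles_per_day, days_between_disengagements(450, miles_per_day))
# prints:
# 150 3.0
# 225 2.0
```

A taxi that covers more ground per day would hit that 450-mile mark sooner, so the interval shrinks as utilization goes up.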

Tesla is only up to about 450 miles.

Congrats on your hugely successful launch!!! https://finance.yahoo.com/news/tesla-robotaxi-videos-show-speeding-161403957.html

It's funny how the anti-Tesla people completely ignore this point. You have to compare yesterday to when Waymo recently launched. Guaranteed that Tesla will eliminate the supervisors (they're not driving, they're only stopping) once the data says they're fine. Does Waymo still have a control center where they can joystick the cars out of situations? I haven't kept up with them.

In addition, they took FSD trained on US roads and deployed it successfully in China. Ditto (not yet approved) for several cities in Europe.

What does this have to do with the topic at hand? Are you just a bot?

Because then it's not strictly driverless. The human in the passenger seat can stop the car, not drive it.

I wonder what happens if you unlock the doors on the touchscreen and then try opening the door at speed. Kinda want to go try myself but I don't need to go anywhere today.

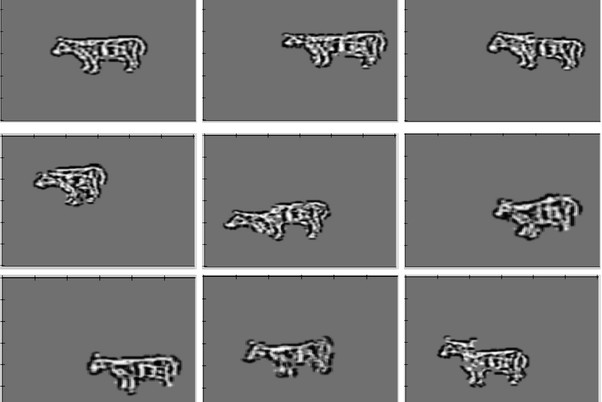

> It's easy for you to walk around, make jokes, understand nuance, and get sarcasm.

Honestly the models I use on a day-to-day basis are pretty great at all of these except walking, but as I understand it there are a few AI models that are great at walking.

> which is why traveling a pre-mapped route could be an advantage

This statement I'm 100% on board with. The word "could" is critical here, though. Pre-mapping likely gives a big boost to reliability right now. I also recently saw a video of a Waymo stopping for a cat that ran across a street out from behind a parked car right in front of its bumper at night. My Tesla would have smoked that cat without a doubt. LIDAR is amazing technology that let that Waymo not hit that cat.

But neither of these technologies, pre-mapping or LIDAR, are prerequisites for a successful autonomous taxi service. They're upgrades or improvements that give a big boost at the current level of technology, but like...

Here are pictures of a cow generated by an AI model only 9 years ago: https://i.imgur.com/gVMoNCS.jpeg

And here's a more recent one: https://www.freepik.com/free-ai-image/photorealistic-view-cows-grazing-nature-outdoors_152372394.htm

Tesla's AI + vision solution *WORKS RIGHT NOW* as well as it needs to. It doesn't work as well as LIDAR + mapping in all cases (though it beats it in a few niche ones). But I have absolutely no doubt in my mind that Tesla's solution will continue to rapidly improve.

I didn't use FSD at all until November of 2024. I thought it constantly mode-switched between shitty and annoying and downright dangerous. Then we got the Nov 2024 patch and everything changed overnight. It was a noticeable leap in performance.

Mapping isn't critical. LIDAR isn't critical. AI + vision can do it now and it will do it better in the future than it does it now.

I’ll try it and let you know lol UPDATE: still nothing

If you try to open the door while FSD is active, the car will immediately start to pull over and/or stop. It won’t open the door while the car is moving

The AI Models you work with *simulate* understanding jokes, nuance, and sarcasm. There is a monumental gulf between *being* and simulating. That said, when we finally allow computers to drive everywhere, they need to not just be a little better than humans. They need to be SUBSTANTIALLY better. And while Tesla's vision systems are definitely impressive, forgoing LiDAR will always mean they're inferior to systems with cameras and synthetic-based vision systems. Why not have multiple sources of input that can be cross-referenced against each other? Because, when that cat (or kid) darts out on a dark, foggy night, the Waymo will try its best to stop, while the Tesla only hits the brakes after the impact. Let me add - The current FSD is worlds better than it used to be, but it's still like driving with a 15-year-old that just got their learner's permit.

Except a 15 year old can reason. In its current form Tesla Vision lacks true reasoning. Even if it did 99% better than the current population of drivers, that 1% of failure is in the millions. Tesla Vision has a long way to go even on perfect sunny days, let alone other environmental factors. Waymo has held the reins at level 4 autonomy in the US. Time will tell if a vision-only system will meet or beat that. I am enjoying FSD in its current iteration for consumers fyi.

> And while Tesla's vision systems are definitely impressive, forgoing LiDAR will always mean they're inferior to systems with cameras and synthetic-based vision systems. Why not have multiple sources of input that can be cross-referenced against each other?

A LiDAR system can only be as good as its software. A vision system with the right software can outclass a LiDAR system.

A Model 3 costs somewhere around $28,000 to manufacture. A Waymo costs $60,000 to $180,000 depending on the model. That $60,000 car has fewer LiDAR sensors than previous Waymo cars to help keep costs down while also being a lower quality car than a Model 3. The actual Cybercab will likely be even less expensive to manufacture than the Model 3.

It's a cost thing vs marginal benefit. If vision + AI can close the gap fast enough with LiDAR performance then the marginal benefit simply isn't worth the cost.

You're speculating. I'm speculating. I get that. The reality is that FSD is working for cabs right now.

> Let me add - The current FSD is worlds better than it used to be, but it's still like driving with a 15-year-old that just got their learner's permit.

And it'll drive like a 17 year old next year and a 20 year old the year after that and a 28 year old professional cabbie the year after that.

> There is a monumental gulf between being and simulating.

Is there? When the simulation is indistinguishable from the reality, is there really a difference?

Interesting - if they can goad the police into strictly enforcing speed limits on automated taxis, while continuing the usual discretion for human-driven cars, this could significantly damage automated taxi adoption. Not specific to Tesla, of course.

I am trying to make a point, is it not accurate? Why are you calling me a bot, rude.

I can't say for sure what it does now, but I would assume at some point pressing the rear door open button while in motion would trigger a warning about the car being in motion and the danger, and the car may start slowing down just in case as well. Or it might simply ask on the rear screen if the passenger wants to leave the car right now.

Agreed. It's so bizarre seeing comments like this. It's a brand new to Tesla service using a different form of technology than was ever used before, extra safety just in case should be considered normal unless you're some asshole who doesn't care about safety of random humans.

Your point is completely unrelated to this post. Regardless, the Model Y has been out for 5+ years (and Model 3 for 8+ with the same emergency release mechanism) and there have been no patterns of danger from this design. How can you explain that?

FSD is shockingly good so much of the time, but Waymo is really setting the bar very high IMO. I love seeing this space move forward by two great competitors! You should check out some more of the videos Dmitri has posted recently, here are some particularly impressive examples: https://x.com/dmitri_dolgov/status/1900219562437861685?s=46 https://x.com/dmitri_dolgov/status/1900220998714351873?s=46 https://x.com/dmitri_dolgov/status/1894817664230662306?s=46 https://x.com/dmitri_dolgov/status/1803101356250849693?s=46 https://x.com/dmitri_dolgov/status/1868778679868047545?s=46

The human can't temporarily sit in the driver's seat? They're committed for life?

Or they’re all ex-Airbus drivers /s

In the video that shows the RoboTaxi making a mistake and correcting itself, the passenger monitor person has their hand on the handle, but not on the button. Thought that moment was interesting.

Yall clearly never been driven around in FSD.

re 1: No. You cut off the system when *it* makes a mistake, not when others make mistakes and the system can handle them. For example, when there's a collision imminent, you want the system to do everything it can to mitigate the effects of it. Micro-steering, selecting the best angle to hit, avoiding being kicked off a bridge, such stuff. You do not want to turn off the system and leave everything to current trajectory.

When pressed, it engages the remote driver sitting in Tesla offices

Funny how this was supposed to be a big test with no human intervention, meanwhile a safety monitor is still needed

Monitor should be in driver's seat.

"In its current form Tesla Vision lacks true reasoning." Are you implying Waymo has true reasoning?

Bro, there is nothing special or magical about the guy’s thumb on the same button you used to get out of the vehicle. It’s a way to disengage the vehicle when it senses the passenger door is open.

Mine doesn’t stop the car when I try to use the door release button… it does nothing at all. I’m sure if I used the emergency lever to open the door, it would do what the manual is describing.

Bro the button is disabled while driving in other teslas, unless you’re already stopped. While I don’t think it’s magic, it could quite possibly be programming 🤔

Does anyone know what the FSD Settings for the Robotaxi are? I see it’s in “Standard” mode vs Chill or Hurry. But what are the Offsets set at?

It could also be a direct contact button with a remote safety driver to take over. They were using these remote operators at the cybercab event, so it's not a stretch.

Potentially, it’s a mechanism to insert a marker into the log data (similar to the “report a bug” defect reporting feature available through voice control) to make it easier to analyze later.

Meh… they don’t seem to be too worried about blowing up a few hundred million dollar starships…

I agree but credit should be given where credit is due. They got past relanding falcons and now everyone is trying to copy. Elon runs some innovative companies, though he’s a doof.

I think it triggers a call to the remote operator.

> Does Waymo still have a control center where they can joystick the cars out of situations? I haven't kept up with them.

As I understand it, they have never had joystick-like controls; they can only give the car instructions or answer its questions.

[deleted]

I have the latest public build of FSD on HW4 in my car. My answer is yes. It is a safer driver than I am, and I can't recall the last time I had a safety-critical disengagement.

Not really. Waymo is way less dependent these days on mapping than you may think. Drago talks about their mapping approach as like feeding in priors. It lets the system predict things it can’t see yet for a better/smoother ride, but nothing about it is safety critical. Of course, it also helps with getting where it’s supposed to go, which sometimes Tesla’s FSD + Nav setup still has trouble with (including in at least one of the Austin videos).

[removed]

So there’s a person in the robotaxi? Why isn’t he just driving?

I'm gonna start driving mine like that. FSD does scary stuff.

To stop the car, simply exit the vehicle!

Could they just make it a button?

I think it becomes a call to resolve action button while the car is in motion during a ride. It triggers a take over alert for the remote operators silently.

Smart, but there is something about that button. They all have their finger over it.

yeah FSD (supervised) is completely new to Tesla we agree. Going from fake autonomy to the real thing is hard

I’m reading all these comments and I’m still not really sure what you all are talking about. Is there a safety inspector in the front seat guarding the door-open button as one would guard the brake while driving? Or are the in-car cameras watching your hand movements, and when they get near the door handle the car behaves differently? I can’t be the only one curious about this conversation and confused about what’s being discussed…

FSD also stops immediately if your seat belt is taken off. Super annoying when I have my car on Autopilot so I can remove a jacket and I slip up on instinct and pop the belt off for a few seconds, as I would if I were driving myself and needed that extra wiggle room to remove a garment that's making me overheat.
