MarchMurky's Law of Tesla FSD Progress*

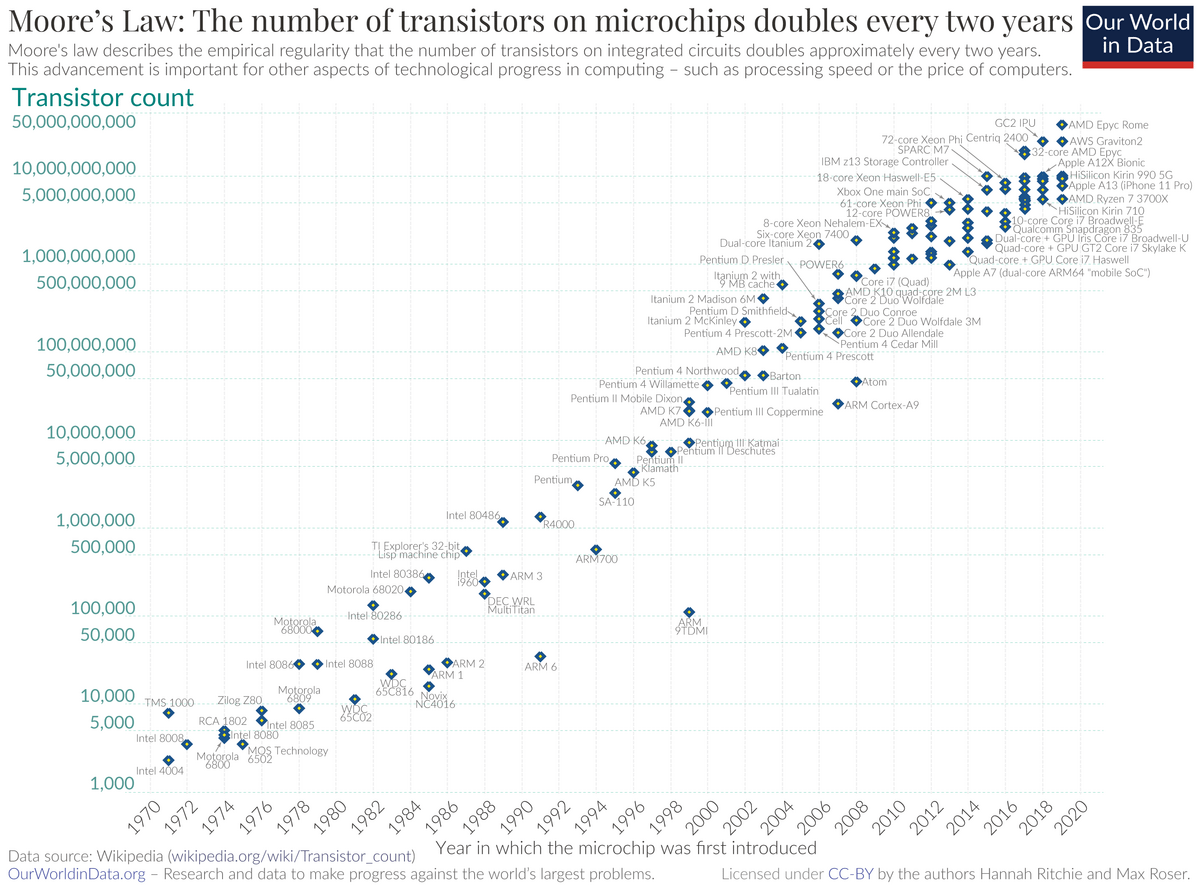

\* with apologies to Gordon Moore

Here's an attempt to model the progress of FSD, based on the following from a comment I saw in r/SelfDrivingCars that I'll take at face value: "The FSD tracker (which was proven to be incredibly accurate at anticipating performance of the robotaxi) shows that 97.3% of the drives on v13 have no critical disengagements."

Let's see what happens if we assume that development started in 2014, and that the share of drives with critical disengagements has been decreasing exponentially since then. Halving every two years seems a sensible rate to consider as it corresponds to [Moore's Law](https://en.wikipedia.org/wiki/Moore%27s_law), and this turns out to be a very good fit to the 97.3% figure above.

You can check this easily. If 100% of drives had critical disengagements in 2014, 50% would have in 2016, 25% in 2018, 12.5% in 2020, 6.25% in 2022, and 3.125% in 2024. In 2025 we'd expect to see about 70% of that (as 0.7 × 0.7 is approx. 0.5), which is about 2.2%, and 100% - 2.2% gives us 97.8% with no critical disengagements.

I posit that it is optimistic to model progress as exponentially decreasing disengagements. Also, assuming development started in 2014 implies slightly faster progress than if we used 2013 as the start date, when there may have been some early work done on the Autopilot software that evolved into FSD. Finally, 97.8% being > 97.3% suggests to me that this model will give us a sensible upper bound for the rate of progress.

So let's calculate [nines of reliability](https://en.wikipedia.org/wiki/Nines_(notation)) for FSD with this model. The share of drives with critical disengagements fell to < 10% in 2021, yielding **90% in 2021.** It will fall to < 1% in 2027, yielding **99% in 2027**, < 0.1% yielding **99.9% in 2034**, < 0.01% yielding **99.99% in 2041**, and, similarly, **99.999% in 2047** and **99.9999% in 2054**. Note I have suggested that this is an upper bound for the progress, i.e. these dates represent the earliest we might expect to see these milestones reached.

The key question is, I argue, **how many nines of reliability are required** for removing one-to-one supervision to make sense? That is, at what point do the savings from no longer paying the salary of the chap in a robotaxi's passenger seat (likely to be in the tens, but not hundreds, of USD per drive), plus the positive PR value of truly unsupervised operation, exceed the financial liability and negative PR from any incident, e.g. a crash, that results from there being no supervisor present when a critical disengagement is needed, or from the inability to make one?

The reason I suggest this is the key question is that I posit it is obvious that **while one-to-one supervision is in place robotaxi cannot make a profit**, as the supervisors will be paid at least as much as a taxi driver, or a delivery driver in the case of trying to save money by using robotaxi to deliver cars to customers.
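
For anyone who wants to check the arithmetic, here is a minimal Python sketch of the halving model described above. It assumes, as the post does, a 2014 start at 100% and a halving every two years. The names `START_YEAR`, `HALVING_YEARS`, `failure_fraction`, and `year_reaching_nines` are mine and purely illustrative; this is a toy calculation, not anything Tesla or the FSD tracker publishes.

```python
import math

START_YEAR = 2014     # assumed start of development (100% of drives fail)
HALVING_YEARS = 2.0   # assumed halving period, borrowed from Moore's Law


def failure_fraction(year: float) -> float:
    """Modelled fraction of drives with a critical disengagement in `year`."""
    return 0.5 ** ((year - START_YEAR) / HALVING_YEARS)


def year_reaching_nines(nines: int) -> float:
    """Year in which the modelled failure fraction drops to 10**-nines."""
    # Solve 0.5 ** (t / HALVING_YEARS) = 10 ** -nines for t.
    return START_YEAR + HALVING_YEARS * nines * math.log2(10)


if __name__ == "__main__":
    clean_2025 = 100 * (1 - failure_fraction(2025))
    print(f"2025: {clean_2025:.1f}% of drives with no critical disengagement")
    for n in range(1, 7):
        print(f"{n} nine(s): {year_reaching_nines(n):.1f}")
```

Under these assumptions each extra nine takes 2 × log2(10) ≈ 6.6 years, and rounding the results to the nearest year reproduces the milestones above: 2021, 2027, 2034, 2041, 2047, and 2054.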