Valuesauce

2025-07-23 12:03

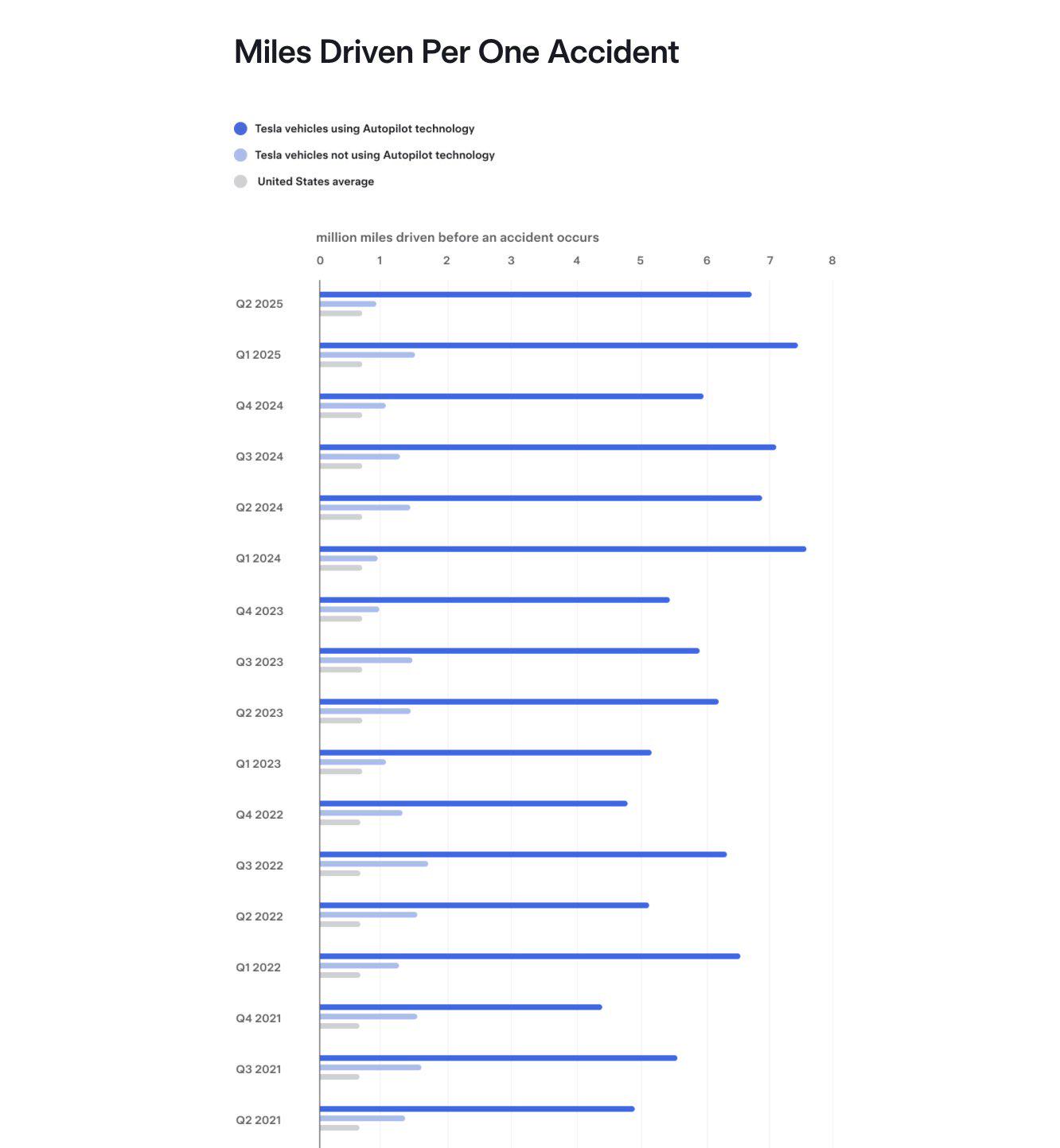

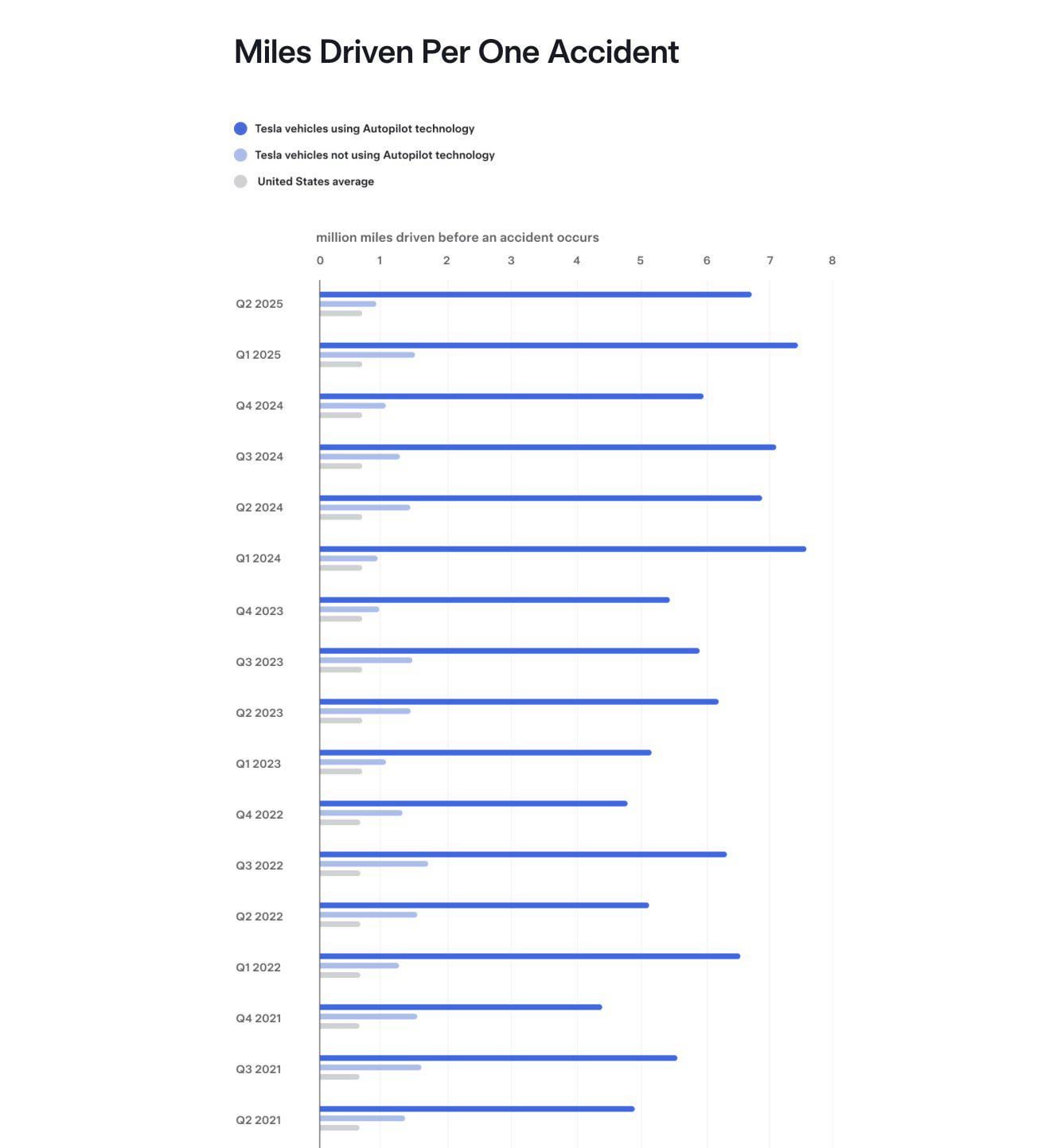

Weird data like this never makes it to every thread crying about robotaxi

Edit to further clarify: specifically the people who will make an argument that robot taxis are dangerous, while ignoring the 5 other human traffic violations posted that same day on the same thread.

grogi81

2025-07-23 12:08

Statistics like that need to be closely examined.

Some surgeons have outstanding survival rates, while others fall short. It’s easy to assume the former are more skilled, but often their success comes from avoiding high-risk patients and focusing only on straightforward cases.

Similarly, if Autopilot or FSD only takes control in ideal driving conditions while humans handle the difficult scenarios, claiming these technologies are safer can be a bit of a stretch.

woalk

2025-07-23 12:09

It’s not really meaningful for the Robotaxi, as this data does not give any indication about driver intervention.

Computer + human driver can see a lot more than either of the two can on their own.

StarFire82

2025-07-23 12:09

Quick, quick now how do you spin this negatively? Autopilot plus a lot of highway driving is why I prefer driving my Tesla almost all the time now.

kingkongbash

2025-07-23 12:11

Autopilot disengages in high risk situations. Selection bias

vwite

2025-07-23 12:12

I think because FSD still fucks up often enough, like if you actually went to sleep while on FSD you'd be in a crash in less than a couple of weeks (in a city other than Austin).

But autopilot (not FSD) is absolutely fantastic as "co-pilot", there are way too many times drivers get distracted but autopilot brakes before it's too late, the cameras for changing lanes and all visual and auditory warnings are very helpful to correct course before it's too late. Humans get distracted once in a while but the 360° cameras are always watching.

Open_Bug_4196

2025-07-23 12:15

Do the non-Autopilot stats cover only motorways (the main use of Autopilot), or do they count every single road?

Meats10

2025-07-23 12:16

You need a breakdown of city vs highway miles. Autopilot/FSD can rack up huge mileage numbers without much stress on the highway. This is most likely where people turn it on the most to reduce fatigue. On local roads, I doubt people turn it on as much because of the higher degree of interventions and driver stress. There are obvious behavioral issues with its usage, and miles per accident is an almost meaningless KPI.

Aromatic-Pudding-299

2025-07-23 12:16

How many human interventions did they record per mile driven with FSD (I.e hitting the break or intervening).

Reeeeeekola

2025-07-23 12:19

Autopilot turns off before impact.

ChunkyThePotato

2025-07-23 12:21

If you actually read the page, you'd know that they count any accident that occurs up to 5 seconds after Autopilot turns off as an Autopilot accident.

mitchsn

2025-07-23 12:21

Yet my Insurance rates keep going up up up

tech01x

2025-07-23 12:24

Insurance rates reflect losses from all causes including disasters, especially flooding, as well as other people hitting Teslas because subrogation doesn’t always work, and there’s tons of underinsurance.

juliusklaas

2025-07-23 12:26

Yeah, autopilots are used in situations where you're less likely to have a crash per driven mile. I assume the data is not corrected for this.

windblowshigh

2025-07-23 12:28

But! but, reddit says.....

juliusklaas

2025-07-23 12:28

This. If the data is not corrected for the quality of the environment, it's useless. Graph would look similar if you compare human to human in environments where autopilot works well vs where it doesn't, and is therefore used less.

JonG67x

2025-07-23 12:28

It appears worse than the same quarter a year ago, and Teslas not using Autopilot seem to have almost double the accident rate for Q2 2024, so does that mean Tesla drivers are getting more irresponsible, or are there issues with the passive safety systems, or something else? But to be honest, I place very little value on these figures either way.

juliusklaas

2025-07-23 12:28

Yeah the uncorrected data doesn't say much.

matt11126

2025-07-23 12:28

"We investigated ourselves and found no wrong doing"

Surely Tesla would never release condemning data, especially with the release of the Robo Taxi right ? The same way police departments are always not guilty of wrongdoings...

PsychologicalBike

2025-07-23 12:31

"Methodology:

We collect the amount of miles traveled by each vehicle with Autopilot active or in manual driving, based on available data we receive from the fleet, and do so without identifying specific vehicles to protect privacy. We also receive a crash alert anytime a crash is reported to us from the fleet, which may include data about whether Autopilot was active at the time of impact. To ensure our statistics are conservative, we count any crash in which Autopilot was deactivated within 5 seconds before impact"

wamsankas

2025-07-23 12:31

For anyone doubting this, go use autopilot or FSD yourself and you’ll clearly see it’s safer than you driving or any other drunk or distracted idiot on their cell phone.

sier0038

2025-07-23 12:32

They count any accident that occurs up to 5 seconds after Autopilot turns off as an Autopilot accident.

[deleted]

2025-07-23 12:32

[removed]

PsychologicalBike

2025-07-23 12:38

LOL, you haters are getting more desperate and pathetic. Autopilot is mostly used on the highways at 70mph, which is 31 meters per second.

So you're saying that the car is designed to know exactly when an accident is going to happen in 200 meters every time and then inform the driver by setting off an alarm, then disengage for the driver to take over? That's impressive technology don't you think?
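For reference, the arithmetic behind that distance can be checked with a short sketch. The 70 mph speed and the 5-second window come from the comments in this thread; the helper name is made up for illustration.

```python
# Metres per mile divided by seconds per hour.
MPH_TO_MPS = 1609.344 / 3600

def distance_covered_m(speed_mph: float, seconds: float) -> float:
    """Distance in metres travelled at a constant speed_mph for `seconds`."""
    return speed_mph * MPH_TO_MPS * seconds

speed_mps = 70 * MPH_TO_MPS            # about 31.3 m/s
window_m = distance_covered_m(70, 5)   # about 156 m over the 5-second window

print(f"{speed_mps:.1f} m/s, {window_m:.0f} m in 5 s")
```

At 70 mph the 5-second window works out to roughly 156 meters rather than 200.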

DanielDC88

2025-07-23 12:39

It was a joke I’m sorry

resipsa73

2025-07-23 12:39

The methodology says it includes any crash that occurs within 5 seconds after a disengagement. TBF, five seconds is a long time in a high risk situation. If an accident occurred more than five seconds later it's unlikely Autopilot had a material impact on the accident.

>We collect the amount of miles traveled by each vehicle with Autopilot active or in manual driving, based on available data we receive from the fleet, and do so without identifying specific vehicles to protect privacy. We also receive a crash alert anytime a crash is reported to us from the fleet, which may include data about whether Autopilot was active at the time of impact. To ensure our statistics are conservative, we count any crash in which Autopilot was deactivated within 5 seconds before impact, and we count all crashes in which the incident alert indicated an airbag or other active restraint deployed. (Our crash statistics are not based on sample data sets or estimates.) In practice, this correlates to nearly any crash at about 12 mph (20 kph) or above, depending on the crash forces generated. We do not differentiate based on the type of crash or fault (For example, more than 35% of all Autopilot crashes occur when the Tesla vehicle is rear-ended by another vehicle). In this way, we are confident that the statistics we share unquestionably show the benefits of Autopilot.

I do think human intervention plays a part in these statistics because they don't (and really cannot) capture the instances in which a human disengages Autopilot/FSD and *avoids* a crash. Still, that the incidence rate is so far below the national average suggests a significant benefit.
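One way to read the attribution rule in the quoted methodology is sketched below. This is only an interpretation of the quoted text, not Tesla's actual code: the function and parameter names are hypothetical, and treating the 5-second boundary as inclusive is an assumption.

```python
from typing import Optional

# Window taken from the quoted methodology; the inclusive boundary is an assumption.
ATTRIBUTION_WINDOW_S = 5.0

def attributed_to_autopilot(active_at_impact: bool,
                            seconds_since_deactivation: Optional[float]) -> bool:
    """True if a crash would land in the Autopilot column under this reading."""
    if active_at_impact:
        return True
    if seconds_since_deactivation is None:  # Autopilot was not used before the impact
        return False
    return 0 <= seconds_since_deactivation <= ATTRIBUTION_WINDOW_S

def counted_as_crash(restraint_deployed: bool) -> bool:
    """The quoted text counts crashes where an airbag or other active restraint deployed."""
    return restraint_deployed

print(attributed_to_autopilot(False, 1.0))  # disengaged 1 s before impact
print(attributed_to_autopilot(False, 8.0))  # disengaged 8 s before impact
```

Under this reading, a disengagement one second before impact does not move a crash out of the Autopilot column, while a minor bump with no restraint deployment would apparently not be counted for either column.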

PsychologicalBike

2025-07-23 12:41

"Methodology:

We collect the amount of miles traveled by each vehicle with Autopilot active or in manual driving, based on available data we receive from the fleet, and do so without identifying specific vehicles to protect privacy. We also receive a crash alert anytime a crash is reported to us from the fleet, which may include data about whether Autopilot was active at the time of impact. To ensure our statistics are conservative, we count any crash in which Autopilot was deactivated within 5 seconds before impact"

draftstone

2025-07-23 12:42

Not just that, every time it does something crazy, a human can intervene before it happens. What would be good is the number of interventions and disengagements due to bad conditions per mile. It disengaged on me because it could not see the road anymore. It did not crash, but it was unable to continue, so it should count as "FSD would have crashed". Same for human intervention, if you have to intervene, "FSD would have crashed". FSD by design does not operate in unsafe conditions and humans can intervene and correct before crashes happen. So on a 1000-mile trip, if FSD can't drive for 200 miles because of weather/backroads and in the other 800 you intervene twice, this report will show 0 crashes in 800 miles driven for a perfect 100% score, while it was unable to understand the scenario for 20% of the drive and the human prevented 2 possible crashes.
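The hypothetical trip in that comment can be written out as a small sketch. The numbers (1000 miles, 200 miles where FSD could not drive, 2 interventions, 0 crashes) are the comment's own example; the `Trip` class and metric names are made up here for illustration and are not anything Tesla reports.

```python
from dataclasses import dataclass

@dataclass
class Trip:
    total_miles: float
    fsd_miles: float        # miles actually driven with FSD engaged
    interventions: int      # times the human took over during FSD miles
    crashes_on_fsd: int

def summarize(trip: Trip) -> dict:
    """Compare the crash-only metric with the extra metrics the comment asks for."""
    return {
        "crashes_per_fsd_mile": trip.crashes_on_fsd / trip.fsd_miles,
        "share_of_trip_fsd_could_not_drive":
            (trip.total_miles - trip.fsd_miles) / trip.total_miles,
        "interventions_per_1000_fsd_miles":
            1000 * trip.interventions / trip.fsd_miles,
    }

example = Trip(total_miles=1000, fsd_miles=800, interventions=2, crashes_on_fsd=0)
summary = summarize(example)
print(summary)
```

The crash-only metric comes out at zero, while the other two show the 20% of the trip FSD sat out and 2.5 interventions per 1000 FSD miles.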

cchackal

2025-07-23 12:43

But but…..I saw a video of teslas crashing into mannequins on the street! /s

PsychologicalBike

2025-07-23 12:43

Baha, no worries. But there are worse arguments made on Reddit all the time, so it's hard to tell these days :D

draftstone

2025-07-23 12:44

And in your example of the good surgeons, FSD being monitored by a human that can intervene if FSD starts doing something stupid, is as if your surgeon was monitored by another one who can tell him to stop, he will take over to fix what the first had done incorrectly and give him back the scalpel afterward. Lot easier to keep patients alive this way and get good stats if those surgeons interventions are not counted.

[deleted]

2025-07-23 12:45

Funny. It didn't turn off when it saved my whole family's life. Or on another occasion when my brother fell asleep for 4 minutes at 140 km/h.

Yes, minutes.

People love to talk shit about Tesla and autopilot but I would love to know how many lives it saved.

KnubblMonster

2025-07-23 12:46

That was obviously a joke.

Sethcran

2025-07-23 12:48

Need help. Mine drives like a drunk teenager. Am I just that bad of a driver?

Sethcran

2025-07-23 12:50

What's the reason that Tesla vehicles without autopilot are so much higher than the average? That doesn't make sense to me, and until there's a good explanation, I'm going to assume that this is apples to oranges and the Tesla statistics are not measuring quite the same thing as the national average.

My assumption for now is because the Tesla numbers are highway only and the non-tesla numbers are all roads.

whiteknives

2025-07-23 12:50

Wherever Autopilot was in use.

Cool-Newspaper-1

2025-07-23 12:51

Driving on a highway is much more predictable than in a city, so this data is fully meaningless for Robotaxi.

Doza13

2025-07-23 12:52

I bet the crash was caused by another driver.

justinreddit1

2025-07-23 12:54

I use FSD daily on a 240KM roundtrip for work. It amazes me how good it is. (HW4)

originalmember

2025-07-23 12:54

I almost exclusively use autopilot on open interstate and end up driving myself in urban areas because I don’t like how it handles various situations (racing up to stopped traffic in rush hour).

It took some effort, but it’s estimated that the national interstate accident rate is roughly 1/1M miles. This would also include the interstate rush hour fender bender. Urban streets have a national accident rate of 2/1M miles.

I suspect Autopilot IS safer than a human, but not 6x… maybe 2-3x due to selection bias? Then you have issues pointed out above. For example, autopilot turns off in heavy rain and fog. Or where the highway markings are poor. All areas where humans also have significantly higher chances of getting into an accident.
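The selection-bias point can be illustrated with a rough sketch. The two rates (1 and 2 crashes per million miles) are the estimates quoted in this comment; the 50/50 mileage split for human drivers is purely an assumption for illustration, and the function names are made up.

```python
# Crashes per million miles, as estimated in the comment.
INTERSTATE_RATE = 1.0
URBAN_RATE = 2.0

def blended_rate(interstate_share: float) -> float:
    """Overall crash rate for a driver whose miles are split between road types."""
    return interstate_share * INTERSTATE_RATE + (1 - interstate_share) * URBAN_RATE

def apparent_advantage(human_interstate_share: float,
                       system_interstate_share: float = 1.0) -> float:
    """How much better a system looks if it is exactly as good as a human on each
    road type but is used on a different mix of roads."""
    return blended_rate(human_interstate_share) / blended_rate(system_interstate_share)

# Assumed: humans drive half their miles on interstates, the system is used only there.
print(apparent_advantage(0.5))  # 1.5
```

With these assumed numbers, a system with no real advantage on any road type would still look 1.5x safer overall, which is why a like-for-like comparison needs the road mix.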

CAVU1331

2025-07-23 12:54

Breaking would count as an accident. Braking would be a disengagement and not using autopilot technology.

[deleted]

2025-07-23 12:55

I'd sell my Tesla if I couldn't use autopilot, it's an incredible tool

zhenya00

2025-07-23 12:56

Uh, I actually think FSD works better on secondary roads than on the highway now.

Seriously - most of the issues that FSD still suffers from are either on the highway or in urban areas. Speed control and lane selection on the highway, speed control, lane selection, school zones, potholes, etc. in urban areas. None of these issues really exist on secondary roads.

Lowley_Worm

2025-07-23 12:58

I may be missing something, but this is just Autopilot, not FSD, correct? I don’t see any mention of FSD in the link.

zhenya00

2025-07-23 13:00

Autopilot/FSD can significantly reduce accidents even if it's not ready for fully unsupervised release. That's all this data is showing.

savedatheist

2025-07-23 13:00

There are things called audits that happen sometimes which keep companies honest.

host65

2025-07-23 13:00

So if you break last second then it is not the computers fault. Got it

Skylake1987

2025-07-23 13:02

You would expect a system that has a human driver as back-up to monitor it and ensure nothing unsafe happens to have way fewer crashes than an average human driver. This is not a car driving on its own; it is software that is monitored, with control taken away from it if something unsafe should happen.

matt11126

2025-07-23 13:02

Can you please provide what company audited these claims ? I'd love to be proven wrong however I am not going to trust a trillion dollar corporation whose only goal is profit.

savedatheist

2025-07-23 13:03

They are generally newer cars than average and they have active safety features that operate even when autopilot is not in use.

erichf3893

2025-07-23 13:04

Nah it would be your fault for breaking something

savedatheist

2025-07-23 13:05

And snow. I doubt many people use AP in snow.

Clayskii0981

2025-07-23 13:06

I think there are some reasons for this besides "FSD good".

Like 17% of Tesla drivers use FSD (quick search shows around 1 million out of 6 million). And they really only use it for basic highway use, the city driving is more niche and still being worked on.

So in comparison, it's a small sample size using it on basic highway routes. Which it is very good at. Occasional city street use. On the other side, it's compared with much more people driving everywhere. And the vast majority of accidents occur in city driving/lower speeds.

savedatheist

2025-07-23 13:07

That’s a question for government regulators.

mybotanyaccount

2025-07-23 13:09

Are these part of the exaggerations we've heard about?

Imightbenormal

2025-07-23 13:15

What about the rumour where auto pilot disengages just before the crash, and it is then logged completely as a human fault?

I am not trying to offend. The numbers could be a bit lower than what is actually happening.

I am all for self driving. But we need a standard for some passive sensor technology that can be placed under the road.

So it can work in any weather. Where I live, I have to drive quite some time to get a road that is up to standard where self driving would work.

I can't wait for the technology to get good enough that I can get a fully self-driving car and relax.

But I would never afford it. So I have to wait for self driving subscription taxis.

steinah6

2025-07-23 13:16

I’d be in an accident every day. It completely ignores a busy stop sign on my commute. It slows down a little but doesn’t stop, and other drivers are aggressive. I disengage and report every day.

draftstone

2025-07-23 13:17

This data does not show anything, we need human intervention per mile to at least get an idea. Out of those 6.6 million miles average, if there is 1 or 1000 human interventions, it changes the data a lot. Adaptive cruise control + lane assist on many cars from other brands have perfect record on highways in good weather, does it mean it is as good as FSD on highways?

This data is explicitly formatted to make it look good. It might be as good as the data shows, but it is totally incomplete to come to any conclusion.

vwite

2025-07-23 13:18

Yeah there is a weird area by my home where I also need to intervene every day, because instead of following the curve it almost tries to turn right and starts going towards the curb.

pw154

2025-07-23 13:21

> So if you break last second then it is not the computers fault. Got it

If an accident happens within 5 seconds of disengaging Autopilot/FSD Tesla still counts it as an FSD/Autopilot accident

zirconst

2025-07-23 13:26

Like the ones Elon personally helped defang, fire, and defund?

pw154

2025-07-23 13:27

> Similarly, if Autopilot or FSD only takes control in ideal driving conditions while humans handle the difficult scenarios, claiming these technologies are safer can be a bit of a stretch.

Overall I have no doubt FSD in its latest iteration (v13 on HW4) is safer than the average human driver. Considering the computer doesn't get tired, doesn't text and drive, can see 360 degrees around it at all times, etc. Combined with a vigilant human driver that can take over when needed it's absolutely safer.

Niwi_

2025-07-23 13:28

Which is supposed to only be used on motorways and less so in the city. Big bias here but I think it's still obvious that all the features do in fact make the car more safe than the average car. Whatever that means

ewzetf

2025-07-23 13:29

did lex fridman make these charts

pw154

2025-07-23 13:29

> Need help. Mine drives like a drunk teenager. Am I just that bad of a driver?

FSD v12 drove like a teenager, FSD v13 in Standard mode drives like a seasoned human driver for me.

Niwi_

2025-07-23 13:30

So if I break it would be in this statistic as an "accident"? Idk.. do you have a source for that?

[deleted]

2025-07-23 13:31

Rubbish

[deleted]

2025-07-23 13:32

Rubbish. Read the data

__saves

2025-07-23 13:40

You mean half the accident rate. The bigger the bar the better.

pw154

2025-07-23 13:41

> Yeah, autopilots are used in situations where you're less likely to have a crash per driven mile. I assume the data is not corrected for this.

FSD doesn’t get tired, distracted, or drunk, and it doesn’t text while driving. It can see 360 degrees at all times and reacts faster than a human ever could. Those are major advantages that humans don’t have, even in ideal conditions. So while it might be used mostly in lower risk conditions it still shows that in those conditions it outperforms humans. That alone is valuable and doesn't invalidate the data.

An anecdotal example: I know someone that was using FSD in a Model 3 on the highway, middle lane, when a vehicle in the right swerved directly into its path to avoid an obstacle in their lane. FSD braked hard but still rear-ended the vehicle. Model 3 was written off. FSD fail, right? On reviewing the dash cam footage post accident it was discovered that a Ford F250 was in the left lane in the Model 3's blind spot. The driver of the Model 3 said that if he were driving manually he would have 100% instinctively swerved left to avoid the vehicle swerving into his lane, and would have been involved in an even more serious accident by side swiping the F250. FSD avoided the more dangerous scenario by choosing to brake and rear end the vehicle that swerved into its path instead.

__saves

2025-07-23 13:42

Use my FSD everywhere including urban driving in big cities, it's fantastic (HW4)

photog72

2025-07-23 13:43

I use EAP on freeways and surface streets. I can confirm, no wrecks with its use (in my experience).

fidju

2025-07-23 13:46

https://www.reddit.com/r/teslamotors/s/3bWfHYdTun

twoeyII

2025-07-23 13:47

I think this is relevant because I disengage when there’s large debris in the road or other activities that are high risk that I’ve noticed my car historically isn’t good about. Since I’ve had FSD since 2019 it’s ingrained in my mind to take over in some settings that it might be fine with now. I’m certain I disengage too often so it’s not necessarily a strike against FSD but it’s a reality that I don’t fully trust it. I wonder how many of us are disengaging for the high risk parts. Overall, I feel safer having all these features available if I’m paying attention though.

liberte49

2025-07-23 13:48

Is it not correct that 'Autopilot' as the term is used in a Tesla is not FSD? So is this table the statistics for FSD? Autopilot, as I understand the term for my car, has 3 components: camera-aided lane-following, distance monitoring and adjusting, and speed handling. It will not, for example, recognize a red light or a stop sign, among other limitations. Is the low accident stat table for that? Or does this really mean FSD?

MushroomSaute

2025-07-23 13:51

Yeah, this is my question too - braking is definitely a full disengagement obviously, but I highly doubt they're including it in their "accident" stats.

Herf77

2025-07-23 13:51

It's sarcasm, brake vs break

[deleted]

2025-07-23 13:52

[deleted]

MushroomSaute

2025-07-23 13:53

I feel like interventions are unfortunately less useful than we'd like - I intervene for many reasons, and rarely for reasons that would preclude "robotaxi" capability. Usually just convenience things like waiting a little too long (being too safe) for an opening, making a full stop at signs, etc.

MushroomSaute

2025-07-23 13:55

Hardly obvious - I've seen crazier arguments from legitimate FSD doomers, and plenty expect Tesla to take every possible step to pad their data (which I wouldn't put past them honestly)

Niwi_

2025-07-23 13:57

Oh shit that completely didnt click in my head and I even adopted the wrong spelling wow

Niwi_

2025-07-23 13:57

r/wooosh xD

pickle787

2025-07-23 13:57

Sounds safe

MushroomSaute

2025-07-23 13:58

To be fair, I think it's the only thing Tesla *has* on other cars. You can get more range, better build quality, cheaper, ~~less terrible CEOs~~, etc., depending on your priorities - but the one thing keeping me on my Model 3 is FSD.

SRMax666

2025-07-23 14:00

It’s interesting to note that Tesla drivers as a whole are safer drivers.

grogi81

2025-07-23 14:14

If someone chooses to manually disengage FSD, it must have been a serious issue. I find it risky enough, and let's be generous, assume only 10% of those cases would result in an accident.

So, unless the average distance between manual disengagements exceeds 70,200 miles, I don't believe FSD/AutoPilot technology is actually safer.
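The break-even figure in this comment can be reproduced with a short sketch. The 10% share is the comment's own assumption; the 702,000 miles-per-crash baseline is inferred here from 70,200 / 0.10 (consistent with the roughly 700K figure mentioned elsewhere in the thread) and is not stated in the comment itself. The function name is made up.

```python
def breakeven_miles_between_disengagements(baseline_miles_per_crash: float,
                                           share_that_would_crash: float) -> float:
    """Average distance between manual disengagements at which the system would
    merely match the baseline, if the given share of disengagements stood in
    for a crash that the human prevented."""
    return baseline_miles_per_crash * share_that_would_crash

BASELINE_MILES_PER_CRASH = 702_000   # inferred, see the note above
SHARE_THAT_WOULD_CRASH = 0.10        # the comment's "generous" assumption

threshold = breakeven_miles_between_disengagements(
    BASELINE_MILES_PER_CRASH, SHARE_THAT_WOULD_CRASH)
print(round(threshold))
```

The result is very sensitive to the assumed share: at 1% the threshold drops to about 7,020 miles, and the thread disagrees on how many disengagements are safety-related at all.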

Valuesauce

2025-07-23 14:16

So fsd performs just under 10x better than humans on the same easier test. It’s not the same as robotaxi but an order of magnitude improvement in the “simple” situation bodes well for some sort of improvement in the non-simple situations compared to a human.

[deleted]

2025-07-23 14:16

Meaningless stat - I disengage FSD like 8 times in a 60 mile drive basically anywhere I know it’s tricky and it won’t handle it good. So they are counting all those easy freeway miles as FSD miles without crashes (even AP could handle those miles).

UFO64

2025-07-23 14:23

Has there been independent verification of this? Given the number of claims where Tesla will say autopilot software was not in use while the driver claims it was, I would really love to see a proper audit.

Tesla has a VERY strong financial incentive for this data to be in their favor. Any company with that conflict of interest should not be trusted to publish their own safety data and have it be simply accepted at face value. Take a look at Boeing for why we never trust a corporation in isolation.

That all being said? FSD has gotten to the point where it's rare I have to jump in these days. Happens maybe once a week it feels like? They are clearly making headway which is great to see. Still would be cool to have good data sources to back up my anecdotal experience.

lelandbay

2025-07-23 14:25

This joke flew over everyone's head...

jaredthegeek

2025-07-23 14:26

Sure, believe this when they won’t actually release the raw data for review.

Heavy_Doody

2025-07-23 14:32

I'm actually super impressed by the non-Tesla number. I thought they crashed far more often than every 700K miles.

pw154

2025-07-23 14:33

> Surely Tesla would never release condemning data, especially with the release of the Robo Taxi right ?

You're right to be skeptical of any self reported data, but considering that, compared to a human driver, FSD doesn't get tired or text and drive, and can see 360 degrees at all times, combined with the supervision of a human driver, is it really a reach that the data presented is more or less accurate?

sermer48

2025-07-23 14:35

Any accident that happens within 5 seconds of autopilot/FSD being disengaged is included. Those rumors are false.

robo45h

2025-07-23 14:36

It's not clear. They specifically say "Autopilot technology" rather than "Autopilot." So, this would include FSD, which is valid for street use.

pw154

2025-07-23 14:36

> So, unless the average distance between manual disengagements exceeds 70,200 miles, I don't believe FSD/AutoPilot technology is actually safer.

You're entitled to that opinion, but I use FSD almost daily and can attest that with me supervising it is a safer driver than me alone. I wrote this as a reply to another post in this thread but here's an example:

I know someone that was using FSD in a Model 3 on the highway, middle lane, when a vehicle in the right swerved directly into its path to avoid an obstacle in their lane. FSD braked hard but still rear-ended the vehicle. Model 3 was written off. FSD fail, right? On reviewing the dash cam footage post accident it was discovered that a Ford F250 was in the left lane in the Model 3's blind spot. The driver of the Model 3 said that if he were driving manually he would have 100% instinctively swerved left to avoid the vehicle swerving into his lane, and would have been involved in an even more serious accident by side swiping the F250. FSD avoided the more dangerous scenario by choosing to brake and rear end the vehicle that swerved into its path instead.

Heavy_Doody

2025-07-23 14:38

What I would also like to know is how often human intervention turns out to be the wrong decision.

The car can see in multiple directions at the same time, so it stands to reason that it would make better collision avoidance decisions than I could. Maybe not every time, but often.

I've always assumed that the first time I'm put in a situation where the car is saving the day, I'm going to freak out and slam on the brakes or grab the wheel, and prevent the car from avoiding the accident.

Niwi_

2025-07-23 14:38

Oh is it? My knowledge about US law around it might be outdated

NoHonorHokaido

2025-07-23 14:39

Conveniently autopilot disengages a second before the crash.

robo45h

2025-07-23 14:40

Why assume they are highway only? Because Autopilot is mentioned? Except the term used is "Autopilot technology" -- that includes FSD I think, which can be used non-highway.

robo45h

2025-07-23 14:41

It's not clear. They say "Autopilot technology."

pixelbart

2025-07-23 14:44

Whenever traffic gets tricky, I instinctively disengage AP and take over the wheel. That’s when 90+% of all accidents happen.

steelmanfallacy

2025-07-23 14:47

"Tesla vehicles using autopilot technology" means that AP was in active use?

There is probably some selection bias in that users only activate AP on the freeway or other stretches of road that are relatively reduced risk.

matt11126

2025-07-23 14:49

Sure, on paper it sounds great. Then again, do you want to believe Tesla ? They have a great track record, after all the Roadster released in 2020, right ? Oh... Right they have a track record of lying to their customer base. I'm sure in this instance they wouldn't lie though right ?

You know that FSD turns off whenever the car detects a collision as it's overridden by the hard coded collision avoidance right ?

Cool-Newspaper-1

2025-07-23 14:54

I may be interpreting the data wrong, but to me it looks like they’re comparing total distance driven on either system. As AP is used mostly on highways whereas human drivers also ride in cities, the data is not comparable and doesn’t say a thing about Autopilot’s performance in cities.

miataowner

2025-07-23 14:55

Human interventions per mile also "doesn't show anything" per your own standard, as human interventions can be for literally any reason at all.

I turn FSD on just to let the car drive in a straight line for a while, like a smart cruise control, and then "intervene" when I want to turn or pull into my stop. No, I don't disable FSD to downgrade it to AutoPilot or whatever because it's cumbersome and I have to park the car to change my mind and the driving mode. As such, with FSD enabled, I probably "intervene" half a dozen times each day I drive the wife's Model Y.

I've also intervened when I decided I wanted to stop somewhere else along a planned route, like last week FSD was driving me into downtown Memphis, and I decided to stop at a gas station to pick up a beverage. Nothing was wrong, I just wanted a bigass coffee and there's reasonably decent coffee at the Shell station along my route.

No, interventions per mile doesn't show us anything either, by your method of analysis.

djao

2025-07-23 14:59

Counterpoint, if you went to sleep without FSD you'd crash almost instantly. FSD is still better than no FSD.

pw154

2025-07-23 15:00

> You know that FSD turns off whenever the car detects a collision as it's overridden by the hard coded collision avoidance right ?

If a collision happens within 5 seconds of FSD being disengaged they still count it as an FSD collision.

I use FSD almost daily and can attest that with me supervising it is a safer driver than me alone. I wrote this as a reply to another post in this thread but here's an example:

I know someone that was using FSD in a Model 3 on the highway, middle lane, when a vehicle in the right swerved directly into its path to avoid an obstacle in their lane. FSD braked hard but still rear-ended the vehicle. Model 3 was written off. FSD fail, right? On reviewing the dash cam footage post accident it was discovered that a Ford F250 was in the left lane in the Model 3's blind spot. The driver of the Model 3 said that if he were driving manually he would have 100% instinctively swerved left to avoid the vehicle swerving into his lane, and would have been involved in an even more serious accident by side swiping the F250. FSD avoided the more dangerous scenario by choosing to brake and rear end the vehicle that swerved into its path instead.

evcz

2025-07-23 15:04

Does the accident definition include curb rash while it was autoparking?

Selkis

2025-07-23 15:09

The trick is to disable autopilot right before any imminent crash occurs.

juliusklaas

2025-07-23 15:18

That's exactly the fallacy here: this data does not show that it outperforms humans in certain conditions. It shows that, under ideal conditions, it outperforms human drivers under average conditions.

I won't go into the anecdotal evidence because it's just that.

greyscales

2025-07-23 15:18

Doesn't look like it, seems to be just every single road.

RealWorldJunkie

2025-07-23 15:19

You’d have to take that with a pinch of salt. When I’m using AP, I disengage it by tapping the brake. Not because I needed to intervene, just because I wanted to turn off AP at that time, and I find that tapping the brake gently, so it doesn’t actually engage the brakes, is the simplest way to do that.

People doing that will skew the numbers here if it was recording that

TaifmuRed

2025-07-23 15:19

World leading self driving? Lies, damn lies and statistics. This is only when autopilot is in use and it's not even fsd

Overall_Curve6725

2025-07-23 15:24

The numbers don’t change with human intervention. The point is the lack of accidents

MexicanSniperXI

2025-07-23 15:25

And that one crash is what made the news and people on r/electricvehicles ate it up like grandma’s pancakes.

pw154

2025-07-23 15:25

> That's exactly the fallacy here: this data does not show that it outperforms humans in certain conditions. It shows that, under ideal conditions, it outperforms human drivers under average conditions. I won't go into the anecdotal evidence because it's just that.

There's still value in that, no? As long as it's outperforming a human (even if only in ideal conditions) it's still better than not using it at all.

greyscales

2025-07-23 15:27

Maybe, maybe not.

The methodology of what Tesla counts as a crash (only if airbags deployed) and what the US average based on NHTSA data is (anything with property damage or injury) is not comparable.

speel

2025-07-23 15:30

I think braking + brake force + brake time would be a good measurement.

Thisisaninues

2025-07-23 15:33

Jesus Christ that's an insane commute

Here_There_B_Dragons

2025-07-23 15:34

Did that matter? This is comparing fsd with occasional interventions vs manual driving (interventions 100% of the time in a sense) and the former is much less prone to accidents than the latter. This isn't comparing robotaxi-like service (no human involved) to fsd with interventions.

PotatoesAndChill

2025-07-23 15:45

That's like an hour and a half if you don't have much traffic. I've seen way, way worse.

Walkable distance is nicer though.

LiquidTide

2025-07-23 15:47

Yeah, for me it is picking the less crowded lane at stoplights. 95+ percent of my interventions are voluntary.

MacaroonDependent113

2025-07-23 15:48

Notice the substantial improvement in 2024 when Tesla changed to the neural network end-to-end processing. Comports with the improvement I saw in the car.

kotsumu

2025-07-23 15:48

I wanna know the stat of when a human is behind a wheel as well

Sethcran

2025-07-23 15:48

Only because I'm seeking a reasonable explanation for why a Tesla without any form of automation at all gets in half as many accidents as an average car.

Maybe as another user said it's purely automatic braking type safety features, but I have a very hard time believing that.

DyCeLL

2025-07-23 15:48

The verification comes from the government institutions. All accidents are recorded and investigated; if there were indications that the software is at fault, the institutions are required by law to take action. That’s why much of ‘the news’ you read is bullshit. They don’t wait for the official investigations and draw conclusions without actual proof.

You could argue that the US government is lying but all governments around the world? Don’t think so.

Smartnership

2025-07-23 15:56

> Quick, quick now how do you spin this negatively?

“ER staff and body shops negatively affected”

Impossible-Dig4677

2025-07-23 16:03

Or the autopilot switching off just prior to a crash.

M1A1Death

2025-07-23 16:04

My commute is about 120miles (193km) per day and FSD makes it a breeze. I only do it because I get free charging at work that covers all my travels. Just gotta pay for tires every year.

Plus I get paid well so I’ll do it for a few years

hahnsoloii

2025-07-23 16:13

AND Teslas are in fewer accidents even when manually controlled:

For drivers who were not using Autopilot technology, we recorded one crash for every 963,000 miles driven. By comparison, the most recent data available from NHTSA and FHWA (from 2023) shows that in the United States there was an automobile crash approximately every 702,000 miles.
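
As a rough worked check of the two figures quoted above, the sketch below converts "one crash every N miles" into crashes per million miles and compares the two. Only the 963,000 and 702,000 mile figures come from the quote; the function and variable names are illustrative, and the calculation does not correct for the two sources defining a crash differently.

```python
# Figures quoted in the comment above (miles driven per recorded crash).
TESLA_MANUAL_MILES_PER_CRASH = 963_000
US_AVERAGE_MILES_PER_CRASH = 702_000


def crashes_per_million_miles(miles_per_crash: float) -> float:
    """Convert 'one crash every N miles' into crashes per million miles."""
    return 1_000_000 / miles_per_crash


tesla_rate = crashes_per_million_miles(TESLA_MANUAL_MILES_PER_CRASH)
us_rate = crashes_per_million_miles(US_AVERAGE_MILES_PER_CRASH)

# How much farther the manual-Tesla figure goes between crashes.
miles_ratio = TESLA_MANUAL_MILES_PER_CRASH / US_AVERAGE_MILES_PER_CRASH
# Relative reduction in crash rate versus the quoted US average.
rate_reduction = 1 - tesla_rate / us_rate

print(f"Tesla (manual): {tesla_rate:.2f} crashes per million miles")
print(f"US average:     {us_rate:.2f} crashes per million miles")
print(f"Miles between crashes: {miles_ratio:.2f}x the US average")
print(f"Crash-rate reduction:  {rate_reduction:.0%}")
```

On these two numbers alone, manually driven Teslas go about 1.37 times as far between recorded crashes, which is a crash rate roughly 27% lower, not half.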

[deleted]

2025-07-23 16:14

Is this adjusted for the fact Autopilot refuses to operate in difficult conditions which are more likely to result in accidents? Snow storms, heavy rain, poor road markings, driving directly into the sun.

shumpitostick

2025-07-23 16:17

I don't think this is a fair comparison. Many drivers disengage FSD if they find themselves in a dangerous situation, and the accidents following that would not be counted against FSD in this methodology, even if it led you to the dangerous situation.

About Autopilot - that is specifically meant for boring long highway drives with no lane changes, of course it's safe.

mmMOUF

2025-07-23 16:24

Yep, not using FSD the entire time from point A to point B, and not using it around pedestrians, in parking lots, in construction zones and in other highly variable situations, makes these data comparisons not really of much value to me - FSD is great though for simple driving situations

Starkzillaa

2025-07-23 16:33

I've used FSD nearly exclusively in Southern California - from driveway to parking lot, and autopark - for over a year now on HW3, and there have been fewer than 5 times that I questioned what it was doing outside of making an odd lane change. All while it has gotten out of the way of a potential collision that I didn't see coming several times. This data just reaffirms my thoughts that it is a better driver than most humans

goRockets

2025-07-23 16:42

Something else to keep in mind is the population of cars in each data set. The average vehicle is much older and in worse condition than the average Tesla. All Teslas have AEB and most likely have brakes and tires in much better condition.

So it would be interesting to compare Tesla's crash rate to that of vehicles more similar in price and age if the goal is to compare the safety of AP vs no AP.

dudeman_chino

2025-07-23 16:43

Tesla accounts for this by counting crashes that happen within a few seconds of disabling AP as AP crashes.

>Methodology:

We collect the amount of miles traveled by each vehicle with Autopilot active or in manual driving, based on available data we receive from the fleet, and do so without identifying specific vehicles to protect privacy. We also receive a crash alert anytime a crash is reported to us from the fleet, which may include data about whether Autopilot was active at the time of impact. To ensure our statistics are conservative, we count any crash in which Autopilot was deactivated within 5 seconds before impact, and we count all crashes in which the incident alert indicated an airbag or other active restraint deployed. (Our crash statistics are not based on sample data sets or estimates.) In practice, this correlates to nearly any crash at about 12 mph (20 kph) or above, depending on the crash forces generated. We do not differentiate based on the type of crash or fault (For example, more than 35% of all Autopilot crashes occur when the Tesla vehicle is rear-ended by another vehicle). In this way, we are confident that the statistics we share unquestionably show the benefits of Autopilot.

[Source](tesla.com/VehicleSafetyReport)
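
One way to read the counting rule in the quoted methodology is sketched below. This is an interpretation, not Tesla's implementation: the `CrashAlert` record and its field names are hypothetical, and it assumes a crash enters the statistics only when a restraint deployed, then is attributed to Autopilot if it was active at impact or was deactivated within the 5 seconds before impact.

```python
from dataclasses import dataclass
from typing import Optional

# Window taken from the quoted methodology: deactivation this close to impact still counts.
DEACTIVATION_WINDOW_S = 5.0


@dataclass
class CrashAlert:
    """Hypothetical crash record; field names are illustrative, not Tesla's schema."""
    restraint_deployed: bool  # airbag or other active restraint fired
    autopilot_active_at_impact: bool
    # Seconds between Autopilot deactivation and impact; None if unknown or never engaged.
    seconds_since_deactivation: Optional[float] = None


def classify(alert: CrashAlert) -> str:
    """Return 'not_counted', 'autopilot', or 'manual' under the assumed reading."""
    if not alert.restraint_deployed:
        return "not_counted"  # below the assumed reporting threshold
    if alert.autopilot_active_at_impact:
        return "autopilot"
    elapsed = alert.seconds_since_deactivation
    if elapsed is not None and 0 <= elapsed <= DEACTIVATION_WINDOW_S:
        return "autopilot"  # disengaged shortly before impact, still attributed
    return "manual"


print(classify(CrashAlert(True, False, 2.0)))   # disengaged 2 s before impact
print(classify(CrashAlert(True, False, 30.0)))  # disengaged 30 s before impact
print(classify(CrashAlert(False, True)))        # low-speed bump, no restraint deployed
```

Under this reading, a takeover 2 seconds before impact is still an Autopilot crash, while one 30 seconds before is counted as manual, and a curb scrape with no restraint deployment is not counted at all.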

specter491

2025-07-23 16:48

This data can be easily skewed. You could argue that autopilot has a low probability of crashing because the human driver takes over before the crash occurs. And AP very literally forces you to keep your eyes on the road so of course you're going to have less crashes. But it boils down to are the crashes less likely because of AP doing the driving or because AP forces you to watch the road. I could probably design a car system that forces you to keep your eyes on the road like FSD, has zero autonomous or driving capabilities at all, and end up with similar low accident numbers.

Disclaimer: I own FSD but I'm also skeptical of data on this subject

CarCooler

2025-07-23 16:49

The number 6.69 will be proudly and loudly presented by Elon Musk on today's earnings call.

gorgeousphatseal

2025-07-23 16:56

A crash every 702k miles. Bby come to Florida.

bjohnson838

2025-07-23 16:58

That’s awesome especially compared to the average 700,000 miles when humans are driving. Picking up my new model Y today. Can’t wait to try out FSD with HW4!

justinreddit1

2025-07-23 17:13

Yeah it’s definitely the furthest I have done for work but FSD truly makes it easier. I feel so well rested when I get to my destination in comparison to an ICE vehicle I would arrive agitated or stressed. World of a difference.

justinreddit1

2025-07-23 17:15

Same scenario as me. Free charging at work. It was a no brainer for a long commute to utilize and pay for FSD

DeltaBlast

2025-07-23 17:21

Us from toilet duck..

Koupers

2025-07-23 17:43

That's impressive. I don't even use autopilot atm because it can't handle Utah roads. It constantly gets in and out of the HOV lane, it makes random lane changes because of upcoming exits but ends up cutting cars off, and then it also just chooses the wrong lanes for exits.

Southern-Stay704

2025-07-23 18:06

My experience is 100% the opposite. I drove 800 miles on FSD when I had a trial of it on my Model X. I did a road trip of 400 miles distance and back. The longest period of time I was able to leave FSD engaged before I had to take control was no more than 4 minutes. By that point it was doing something stupid enough that I had to stop it.

I have owned 4 Tesla vehicles since 2015. All of them have had some form of AP, from AP1 to AP2.5 with NOA, to AP3, to FSD. Every last one of them has sucked. As I've driven these cars for the last 10 years, I've used AP less and less and less, to the point where I hardly ever engage it anymore. On the 800 mile road trip, FSD almost killed me and my wife twice, and I stopped it by intervening at the last second.

Anyone who thinks this software/hardware is actually going to work acceptably here soon -- you're delusional. It's nowhere near ready. Not even close. Not even a little bit.

DevinOlsen

2025-07-23 18:15

I hate that this comment gets posted each time.

Take the time to read and you'll see Tesla counts any accidents that happen within 5 seconds of AP being disabled as an accident 'against' AP/FSD.

If you cannot react to something within 5 seconds that is YOUR fault, not the car.

Quin1617

2025-07-23 18:17

> That’s why much of ‘the news’ you read is bullshit. They don’t wait for the official investigations and draw conclusions without actual proof.

And beyond Tesla, news orgs do this for just about everything they report on.

Which is why it’s never a good idea to give initial reports and articles any value.

piense

2025-07-23 18:19

Having a good amount of acceleration on hand also makes it easier to maneuver out of potentially iffy situations. Just super easy to pass or get around a car that’s looking less than predictable to be around.

Kandiak

2025-07-23 18:19

Could this be because a person disengaged autopilot to try to avoid the crash and therefore it wasn’t “on” during the miles driven?

Pliskin01

2025-07-23 18:23

Are you using autopilot, enhanced autopilot, or FSD? Autopilot shouldn’t change lanes for you. I vastly prefer basic autopilot for highway driving over FSD. FSD is nice for unfamiliar locations where I want the car to just take me to the next supercharger.

Quin1617

2025-07-23 18:29

> Which is supposed to only be used on motorways and less so in the city.

That distinction is long gone imo.

Traffic Light and Stop Sign Control doesn’t make sense as a feature if AP is a freeway only system.

Niwi_

2025-07-23 18:34

The Golf is obviously true full self driving. I would just assume it makes most of its miles on highways by far.

Quin1617

2025-07-23 18:34

Just because someone brakes doesn’t mean they got into an accident.

StickFigureFan

2025-07-23 18:45

What I want to know is how many accidents happened within 30 seconds of self driving disengaging

JonG67x

2025-07-23 18:56

Nope - double - the meaningful quarter comparison is Q2 to Q2 as that removes weather, daylight, etc variables, and both are worse than they were in Q2 2024

Scifihistory

2025-07-23 19:01

The problem with these metrics is autopilot often disconnects seconds before a crash.

Are those crashes included? Should they be?

I don’t trust this data at all.

As others have said, autopilot can only be engaged in relatively predictable environments. This data reeks of survivorship bias.

If most crashes occur close to home, and autopilot is rarely engaged close to home, is this data meaningful?

gmatocha

2025-07-23 20:37

Autopilot has a habit of disengaging right before a crash.

FlugMe

2025-07-23 20:39

Is this because right before a crash people have an oh shit moment and disengage autopilot?

This data doesn't really say anything without a lot of context and nuance.

I use auto pilot daily.

EDIT: they count auto pilot if it was active 5 seconds before impact, which imo is pretty good.

shaneucf

2025-07-23 20:41

The title of this post should be "Tesla with autopilot has 10 times less crashes than national avg"

Throwing out a number without any comparison or context is useless

shaneucf

2025-07-23 20:44

It's only 10 times less crashes. Not 100 times.

You can say "Tesla autopilot failed to achieve the safety rate Elon claims"

shaneucf

2025-07-23 20:46

No, you cannot at the same price. Sure you can get better stuff if you want to pay more. but try to find one at the same price but more range, space, performance, build quality, tech, etc. and not Chinese EV.

shaneucf

2025-07-23 20:48

A lot of brands have things way better than autopilot. Try Hyundai, VW and Ford. Their version doesn't have phantom braking, doesn't freak out when you control it, the following is better and speed up like normal human (autopilot is very very slow on speeding back up after slowing down)

VirtualLife76

2025-07-23 21:05

That's the part that makes me question the data overall. I highly doubt the car driven makes what looks like 100% difference in accidents.

123DCP

2025-07-23 21:31

It's a huge bias and Tesla has refused to share the data for cars driven on similar roadways without the use of Autopilot. Tesla has the data for a legitimate comparison but doesn't want to share it and instead shares data that looks favorable if you ignore that they're comparing apples and oranges.

One can draw an inference about what the data Tesla won't share shows about whether Autopilot is as safe as a human driver on similar roads.

(Hint: The logical inference is that Autopilot is not as safe as a human driver with no use of Autopilot under similar circumstances.)

Cold_Captain696

2025-07-23 21:36

The data released by Tesla can’t be compared to the NHTSA data - They measure different things. Firstly the inclusion of Autopilot in the data means it is likely skewed towards freeway miles, which are statistically safer. Secondly, Tesla’s definition of a crash is much more restrictive than the NHTSA definition.

It’s a shame Tesla has chosen to only release the data they have, because it’s likely that they could provide something that would be comparable to the NHTSA data if they wanted to, given the amount of data they collect.

123DCP

2025-07-23 21:49

No. It's comparing accident rates of Tesla vehicles while their drivers are using any form of Autopilot, in those circumstances in which drivers feel safe using Autopilot with accident rates of Tesla vehicles when the drivers are not using Autopilot, including in situations in which they wouldn't feel safe using Autopilot, and with the overall accident rate of all vehicles in the US, regardless of the maker or the use of any driver assistance system.

People are more likely to use all forms of Autopilot on (relatively safe) divided highways and are less likely to use them on (relatively dangerous) city streets. Tesla could give us accident rates with and without Autopilot under similar circumstances, but they've refused to, instead only releasing data biased to show lower accident rates for Autopilot.

It seems likely they wouldn't hide the data allowing an apples-to-apples comparison if that data showed Autopilot providing a significant safety advantage.
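
The road-mix argument above can be made concrete with a small sketch. Every number here is invented purely for illustration (none of it is Tesla or NHTSA data): both driving modes are given identical crash rates on each road type, and only the share of highway versus city miles differs.

```python
# All figures are invented for illustration; they are NOT Tesla or NHTSA data.
# Each entry is (miles driven, crashes) for one road type.
HYPOTHETICAL_FLEET = {
    "autopilot": {"highway": (9_000_000, 3), "city": (1_000_000, 2)},
    "manual": {"highway": (3_000_000, 1), "city": (7_000_000, 14)},
}


def miles_per_crash(miles: int, crashes: int) -> float:
    """Average distance driven between crashes."""
    return miles / crashes


per_road = {}   # mode -> road type -> miles per crash
aggregate = {}  # mode -> miles per crash over all roads combined
for mode, roads in HYPOTHETICAL_FLEET.items():
    per_road[mode] = {road: miles_per_crash(m, c) for road, (m, c) in roads.items()}
    total_miles = sum(m for m, _ in roads.values())
    total_crashes = sum(c for _, c in roads.values())
    aggregate[mode] = miles_per_crash(total_miles, total_crashes)

for mode in HYPOTHETICAL_FLEET:
    print(mode, per_road[mode], f"overall: {aggregate[mode]:,.0f} miles per crash")
```

In this made-up fleet both modes crash once per 3,000,000 highway miles and once per 500,000 city miles, yet the combined figure is 2,000,000 miles per crash for Autopilot against about 667,000 for manual driving. The threefold gap comes entirely from where the miles were driven, which is why a per-road-type breakdown would be needed to settle the question.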

southy_0

2025-07-23 21:54

There may absolutely be scenarios where FSD is consistently worse than a human.

But the average number is so staggeringly better for FSD that it’s at this point a moot discussion.

If you don’t trust Teslas „human driving number“ then take the average of all vehicles, it’ll tell the same story.

Again: not saying that there can’t be cases where FSD underperforms, but at this point in time they are just statistically irrelevant.

bit_errror

2025-07-23 22:03

You can just hit the accelerator pedal, to push it through the stop sign, or making a turn, without it disengaging.

SRMax666

2025-07-23 22:43

Well, the thought processes that buyers of electric vehicles go through, as can be readily seen on this and other subreddits, show more thoughtful behavior and concern for safety and the changes that they will make as an owner. This could lead to safer drivers due to the heightened awareness.

ChunkyThePotato

2025-07-23 22:53

This is incorrect. They released the accident rate for FSD back when it was only enabled on non-highway roads, and it was still better than the human average, despite the human average obviously including a lot of highway miles.

ChunkyThePotato

2025-07-23 22:56

Yup. This is useful for shutting down the bozos who claim FSD is too unsafe to be used on public roads. It's not useful for anything regarding Robotaxi.

But considering there have been literally zero injuries involved with the Robotaxi service since it launched, they don't have anything legitimate to complain about there either.

ChunkyThePotato

2025-07-23 22:58

Are you kidding? You don't think cars driven by largely somewhat affluent people have significantly lower accident rates than the masses? And obviously part of it is that only airbag accidents are counted. But that makes it a fair comparison between the Autopilot airbag accident rate and the non-Autopilot Tesla airbag accident rate.

Here_There_B_Dragons

2025-07-23 22:59

Yes I agree it would be nice to see the accident rates for non fsd but would that still reflect a comparison to a non Tesla car? What about demographic of driver (wealth/age differences) and breakdowns by type of road and type of hw (3 vs 4)

ChunkyThePotato

2025-07-23 23:01

They likely do, but stuff like parking lot fender benders often aren't reported and therefore aren't counted. I would guess the "contact with another vehicle/person/object" accident rate is probably in the 10k-100k mile range.

ChunkyThePotato

2025-07-23 23:01

Can't use that one, buddy. They count any crash that occurs within 5 seconds of Autopilot disengaging. Nice try though!

judge2020

2025-07-23 23:08

All we need is level 3 for a truly stress-free commute.

Ecsta

2025-07-23 23:42

New VW's will put on the hazards, pull over, stop, and call 911.

I assume Tesla could do the same thing (or much better) if they actually wanted to.

Terron1965

2025-07-23 23:44

I use it when I drive and like most people my driving is mainly on city streets in the town I live in.

Ecsta

2025-07-23 23:45

Have you looked at new Tesla pricing? it isn't cheap.

The Hyundai/Kia and VWAG group have amazing basic "autopilot" (not full self driving), I'd argue better than Tesla's. Obviously the FSD is in a different league, but so are the expectations and price tag.

Terron1965

2025-07-23 23:45

NTSB is all over every crash. If it were doing anything like that, it would have been released. There are also insurance companies eager to find liability all over every crash.

It's checked.

Ecsta

2025-07-23 23:46

Let's compare to cars in the same price range to see if it means anything.

I think most would agree with the statement "expensive cars crash less frequently than cheap cars", so I do think Tesla is being factual. Similarly, "BMW's crash less than average" being true, doesn't mean BMW drivers are great drivers.

Terron1965

2025-07-23 23:48

If the system disengages because it can no longer operate safely due to visibility, that's not an FSD crash. That's FSD working as intended. There are conditions in which no one should be driving. Just because a human will get away with it 99.99% of the time doesn't mean the Tesla corp is willing to insure that risk.

Ecsta

2025-07-23 23:48

I think ALL Teslas have good/aggressive automatic emergency braking, something the vast majority of cars don't have and has been proven to significantly reduce accidents.

djao

2025-07-23 23:52

Somebody [tested this in a Tesla five years ago](https://www.youtube.com/watch?v=w9bTqm12GjM). The car does put on the hazards and come to a stop. I don't know if it will pull over (the test in the video was conducted on a street lacking an emergency lane). I'm pretty sure it doesn't call 911.

Terron1965

2025-07-23 23:52

It counts all crashes within 5 seconds of disengagement as FSD crashes.

stomicron

2025-07-23 23:59

Not to mention driver demographics

Confident-Sector2660

2025-07-24 00:09

that can't be right? Back when FSD came out it was horrible. I think it would not even go 2 miles without crashing if you didn't intervene

in 2024 when FSD did not have any highway driving that could be believable. It was "good enough" then

__saves

2025-07-24 01:01

I see what you mean. It could mean all sorts of things. I don't know what conclusions you could draw from that specifically. I myself would like a more meaningful breakdown of the autopilot into autopilot vs FSD, urban vs highway.

Ok_Length_5168

2025-07-24 01:02

Not an apples to apples comparison. Autopilot is mostly used on highways. Most accidents (for all vehicles) occur outside highways.

ChunkyThePotato

2025-07-24 02:07

Yes, it was horrible, but that accident rate was *with supervision*, as all these accident rates are. So because people were able to intervene, the overall accident rate with FSD on just non-highway roads was better than the manual driving accident rate.

Koupers

2025-07-24 02:45

Full FSD. Full FSD appears to be basically useless outside of country roads (which is where I use it the most, road trips it's king)

BeerBaitIceAmmo

2025-07-24 02:49

at first I read this as 240,000 miles :-0

PracticlySpeaking

2025-07-24 02:53

75 miles? That's over an hour — insane!

PracticlySpeaking

2025-07-24 02:54

Wouldn't that also include Adaptive cruise control??

UFO64

2025-07-24 04:58

Where are the NTSB numbers for this?

Terron1965

2025-07-24 06:50

https://www.ntsb.gov/Pages/search.aspx#k=Tesla

grogi81

2025-07-24 08:37

Absolutely with you on that: I am of the opinion that responsible usage of FSD increases safety. Hell - stupid AP did save me once when I was tired and dozed off for I don't know how long - it kept me in lane... But it should be treated like "your trusted driving companion", not "your chauffeur".

Tesla however always hyped it as Full Self Driving. Coast to coast without interaction and other nonsense. They are also presenting the stats like "it was FSD driving and look how much better it is than humans". And this gives a lot of false expectations and encourages misuse...

It is neither Full, nor Self, nor better than humans.

I still don't understand why FSD, when it detects an unresponsive driver, doesn't safely pull over to the side of the road and call emergency services. VW does it, and I think it's an absolutely fantastic feature that will save lives.

Muhahahahaz

2025-07-24 13:49

I audit them on a daily basis, every time I turn on FSD

Have *you* tried it?

matt11126

2025-07-24 13:55

Yes, I have tried it numerous times in a model S, Y and 3. Just wait until it does something stupid and toggles automatic collision avoidance, you'll see FSD gets disabled instantly, hence these claims from Tesla.

Don't get me wrong it's the best level 2 system out there, but that's all it is, a level 2 system.

MushroomSaute

2025-07-24 16:24

My point was any one of those is beaten by other cars, not that they're all beaten for cheaper. You can get higher range if that's what you need, or better build quality, or just a cheaper EV - just depends on your priorities, but bottom line is that while Tesla is the best all-arounder, there are better EVs for each if you care more about one of those things. *Except* FSD, unless literally all you need is a traffic-jam driver with Mercedes.

MushroomSaute

2025-07-24 16:26

Disengagements are not the same as interventions; we were discussing interventions.

shaneucf

2025-07-24 17:24

True. Tesla definitely is not the top on a lot of the items.

shaneucf

2025-07-24 17:26

Isn't model y like $50k? Can you get a Korean or VW with the same performance, space, tech, etc.? They may have some stuff better than Tesla (I doubt on VW) but overall you can't beat Tesla price for what you get.

UCanDoNEthing4_30sec

2025-07-24 17:49

Great… I guess?

I use auto pilot on long stretches of highway or stop and go traffic on a highway, all of which I’m just staying in a single lane. Auto pilot is disengaged when changing lanes in these scenarios. Therefore this really doesn’t tell me shit.

Auto pilot and FSD are great but don't throw out shit stats at me that really mean nothing.

JustAnotherMortal69

2025-07-24 18:06

AP is mostly used on freeways. They need to compare it directly with accident count per million miles on the freeway. Not fatalities or total traffic accidents on roads and freeways.

I would guess that AP is still notably safer than a human (no distractions), but not by the margins we would anticipate given that it is mostly used on freeways and stop-and-go traffic.

The report also shows Tesla drivers are actually safer than the average driver by a good like 10%, so that could also account for some of the positive miles driven on AP. The human probably knows when to take over if they are a consistent AP user.

imme2372729

2025-07-24 21:08

Bro works on the moon (far side to be exact)

SRMax666

2025-07-24 22:05

The Model 3 and Y are actually mid-priced vehicles, half the cost of a Rivian, Lucid or Tesla Model X and S, so that comparison would be more difficult. The bigger difference between ICE and electric, regardless of price, is the thought that goes into moving from ICE to electric.

ulmersapiens

2025-07-24 23:44

All I want is level 3 on the highway so I can use that time to not drive.

Cueller

2025-07-25 01:06

Do they count every non autopilot crash that occurs within 10 seconds of autopilot being used?

1startreknerd

2025-07-25 02:02

Sounds like some California commutes.

justinreddit1

2025-07-25 02:17

Toronto commute, very similar

Proper-Ant6196

2025-07-25 02:43

240 km every day?

GauchiAss

2025-07-25 09:29

I use self driving on my car (not a Tesla but that's not the point) more when the road is easy & long (there is no way I want to spend hours maintaining speed on a straight road) and I prefer manual driving when I know shit can happen (city center, small rural roads, ...)

These stats would need to be on similar roads to be sure the difference is caused by the driver and not the risks involved with some types of roads.

justinreddit1

2025-07-25 11:17

3 times a week

premiumcontentonly1

2025-07-25 16:27

Exactly my thoughts. AP is only activated in open roads usually by most ppl

FunnyProcedure8522

2025-07-26 01:33

Where did you get the idea that FSD would crash immediately??? FSD is built on the first principle of avoiding accidents and crashes first and foremost; in no way will it suddenly decide to just crash or cause a crash. It might pick the wrong lane or make a wrong turn because of the map, but on its own it will always avoid a crash. Or, as the statistic shows, crashes are exceedingly rare.

ronsta

2025-07-26 02:11

I have a tesla model Y with autopilot. Does it run off the same engine as FSD but only work on highways? I’m lost at this point. I do remember when I last used autopilot 3 years ago it was total shit and almost killed me.

Smaxter84

2025-07-26 20:26

Does anybody really trust any numbers relating to Tesla anymore?

Soggy-Ad-3981

2025-07-27 02:55

lmao wtf even

Soggy-Ad-3981

2025-07-27 02:55

in time sure 75 miles during rush hour is like 1.5+ fing hours

BossAnderson

2025-07-27 11:59

Is this even accurate? Anytime when I use FSD and it's about to do something stupid, I take over to avoid a crash. So, does that record that situation as a no crash?

Economy-Emergency732

2025-07-27 12:36

Every time I hear this stat i can't help myself but think if that person takes over just before an FSD accident then technically it wasn't FSD that had the accident. Always wondered how this plays into this stat.

Several_Nectarine_91

2025-07-27 13:13

FSD has improved a lot in the last couple of years. It rarely needs help, and lane selection is a lot better.

That-Television-926

2025-07-27 15:38

Let's see data where FSD was used during the drive that resulted in a crash. When they say "crash while using FSD", how many crashes happened where the human canceled FSD seconds before the crash so that the crash wasn't logged as an FSD crash?

thequeensegg

2025-07-27 15:39

Why would anyone trust internal numbers from Tesla when the company is known to constantly lie about its numbers and capabilities?

thequeensegg

2025-07-27 15:48

Do these numbers take into account the many documented instances of Teslas disabling Autopilot less than a second before impact so that the crash looks to be human-caused? This is a well-reported cheat that Tesla has been using to fudge its numbers for years.

hokeyplayer8

2025-07-29 18:01

I get to be part of that group week 1 of owning my Model X while I watched summon turn into a pole, lucky me!

Puzzleheaded-Rush12

2025-08-01 10:28

FSD V13 is flawless in my city. Absolutely fantastic on city streets.

Meats10

2025-08-01 10:41

I used the FSD trial this year and still too many interventions. If it was flawless why are they looking into new hardware to better support FSD.

Puzzleheaded-Rush12

2025-08-01 11:09

HW4 V13 is really great. I did a 2000-mile trip, and it was fantastic. Yes, it is supervised, but it's great to sit back and relax and listen to music while it does the driving.

The new AI stack is coming for HW4, and it will increase processing depth by 10x according to info presented on the conference call.

HW5 will no doubt be used to process information quicker, but HW4 is doing a great job with FSD V13.

I drove at night through construction zones with narrowed lanes and anomalous lane markings, FSD did the job all by itself.

ku8475

2025-08-05 03:01

I wish Tesla would cave on the lidar already. It's so cheap now and would solidify their FSD. Just makes sense now.

Exact-Dragonfruit480

2025-09-18 20:46

In the midwest? Not in my part of the midwest. If you live in the same town/city that you work in, there's no need for your commute to be that far.